This blog post continues a pattern of reporting we began at the end of the 2015-2016 academic year, when we took a deep dive and shallow swim through the task usage statistics in Eli Review. These course analytics are available to every instructor. We also send cross-course reports to all subscribing institutions, and to others on request.

Task usage is the needle we watch as a company to know whether we're achieving our goal of changing the way writing is taught in the US for the better. In his keynote address at Computers and Writing 2016, Jeff Grabill, Eli Review co-inventor and Associate Provost for Teaching, Learning, and Technology at Michigan State University, explained Eli Review's origins as a company this way:

> Our argument is that if we provide a high quality learning technology—fundamentally a proven pedagogical scaffold + analytics—and the professional learning to support highly effective teaching and learning, then we will be a good partner for others.
>
> And we measure ourselves based on our ability to help students and teachers learn. . . . Moving this needle is a bottom line measure for us.

The number of review tasks and revision plan tasks per course indicates the extent to which instructors are able to embed small bits of writing, targeted peer feedback, and reflective decision-making about feedback into their curriculum and context. We provide open-source sample teaching materials that can be added to any course through the task repository, as well as consulting services to help institutions and instructors build a sandbox of ready-to-load tasks. Even so, most instructors design their own tasks. Task count is therefore a strong indicator of instructors' investment in the rapid feedback cycle pedagogy that the app makes easier and more transparent.

At the end of Fall 2016, we see three headlines from task usage data across the app:

#1 Task usage is on track to exceed 2015-2016 levels.

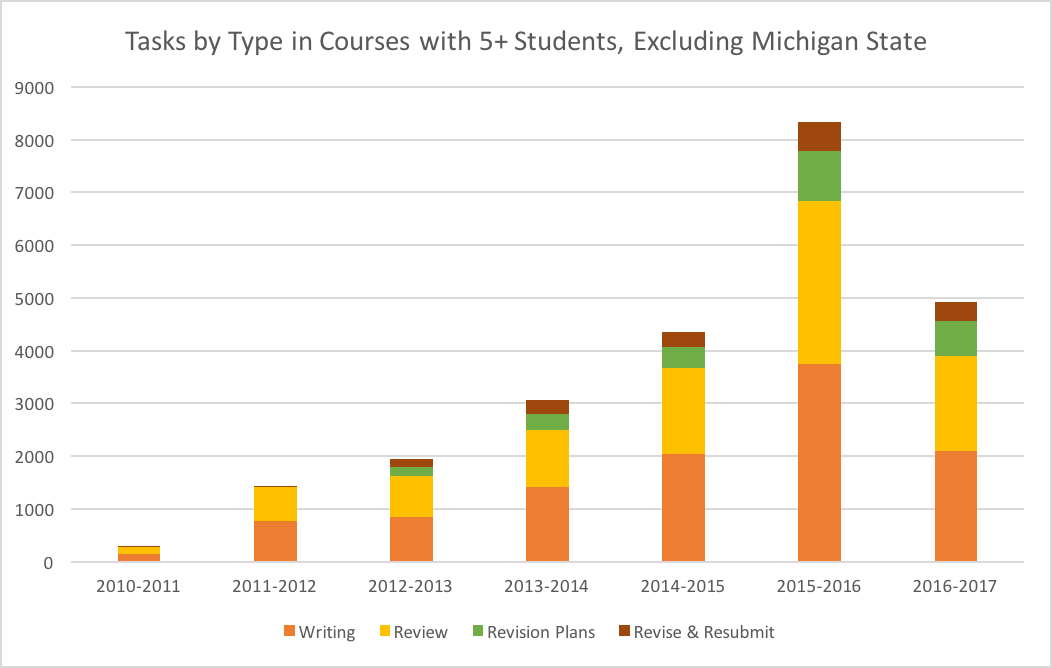

If Spring task usage per course doubles Fall's, as it usually does, 2016-2017 will again exceed the previous academic year, continuing the trend of year-over-year increases. The chart above shows total task use across all institutions except Michigan State University. Because of its size and maturity with the pedagogy, MSU skews the data. Across the other institutions, review tasks are holding steady at 36% of all tasks, and revision plan tasks have increased slightly to 14%.

What do these task counts show? Students are giving more feedback, and peer response encourages them to name and practice the moves in their repertoire. Students are receiving more feedback, so they have real readers thinking alongside them more often in the writing process. And they are spending more time on revision. More time spent reviewing and planning revision means more time spent in the zone of proximal development, learning to communicate about their work in terms of goals, options, and next steps. More time spent revising means more learning. These task counts give us confidence that instructors are using the app to create feedback-rich environments, the ideal conditions for better writing and better writers.

#2 Investment in professional development (PD) has a measurable impact on review and revision plan task usage.

Last year, we expanded our professional development services by offering free monthly online workshops, and just over 150 instructors have enrolled. Many of those workshop participants began using Eli this academic year.

We also partnered with schools in several ways to facilitate extended conversations about peer learning this fall:

| Type | Participating Institutions/Instructors |
|---|---|
| Regular, campus-specific online office hours | |
| Writing Across the Curriculum Inquiry Group | |
| On-Demand PD Workshops | |
| Sandbox and Curriculum Consulting | |
| On-Campus Keynotes and Workshops | |
| Research | |

This mutual investment in professional development led to more instructors assigning 5+ or 9+ reviews per course. The table counts institutions meeting each threshold:

| Fall 2016 | Professional Development Participation | No Concerted PD Participation |
|---|---|---|
| Total institutions | 24 | 22 |
| Institutions where 55%+ of courses enrolling 5+ students assigned 5+ reviews | 20 | 4 |
| Institutions where 10%+ of courses enrolling 5+ students assigned 9+ reviews | 14 | 3 |

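Put as proportions, 20 of 24 PD institutions (83%) met the 5+ review threshold, compared with 4 of 22 (18%) institutions without concerted PD. When instructors work with us and have the conditions necessary to redesign their courses, the number of feedback opportunities in their courses goes up, sometimes dramatically.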

#3 Local faculty leaders have a huge, direct impact on usage.

We have a dozen of these stories, but two stand out in this fall's data.

First, the University of Vermont had 20 courses taught by 10 instructors, 8 of them new to Eli and most from outside English. Susanmarie Harrington and Libby Miles coordinated face-to-face meetings, collaborative work time, a resource center in Blackboard, and several opportunities for our team to present. Their efforts led to unusually deep use in the first semester:

- 11 courses included 5+ reviews;

- 4 included 9+ reviews;

- 9 courses included 1-4 revision plans; and

- 5 included 5+ revision plans.

Second, San Francisco State University is the only institution where more than one instructor assigned 5+ reviews in 100% of their courses. Six SFSU instructors used Eli this term, and three of them assigned 13 reviews, with a revision plan following nearly every review (all but one or two). SFSU is leading the way in making the full write-review-revise cycle standard in its courses. No doubt, John Holland's persistent, thoughtful discussion of how Eli has helped him meet the goals of SFSU's course redesign efforts for hybrid courses inspires his colleagues, and us.

Bottom line: If more feedback and revision is your goal (and it damn well should be!), join us.

The Fall 2016 usage data tells us that, as a company, we’re on track because the majority of the institutions and instructors we work with are increasing peer feedback and revision. That increase is the foundation for better writing and better writers. And that, quite simply, is what we’re all about.

If you’re interested in learning more about including Eli Review in your 2017 courses, here are some starting points:

- Join our newsletter or follow us on Facebook and Twitter.

- Be a student in Eli in our free online workshops for instructors

- Explore our Instructor Resources

- Brand-new instructors can use Eli for free for 2 weeks

- Contact us to talk more about next steps