Teacher Profile: John Holland wanted to teach his first hybrid class the way he’s always taught—using multiple drafts and lots of feedback. When a colleague suggested he try Eli Review in December 2014, John dove right in and quickly found that Eli’s digital scaffold for review and revision transformed how he planned, facilitated, and coached peer learning in his new hybrid courses.

This dialogue is a synthesis of extensive email and voice correspondence between John and the Eli Review team (specifically, Melissa Meeks and Mike McLeod). The dialogue shows how John’s insights moved him from uninitiated to power user, from tentative to confident in his use of Eli with his classes.

Melissa Meeks [Director of Professional Development, Eli Review]: John, you recently described Eli Review to your colleagues at San Francisco State as “the center of gravity” in your newly redesigned hybrid courses this past spring. What do you mean by that?

John Holland [Lecturer, San Francisco State University]: Our syllabus states that students will learn to “revise mindfully, refining ways of giving and using feedback.” To accomplish this objective in our course, I [needed] to build weekly peer feedback components while finding a way to both model effective feedback and provide practice, all in an abbreviated time frame.

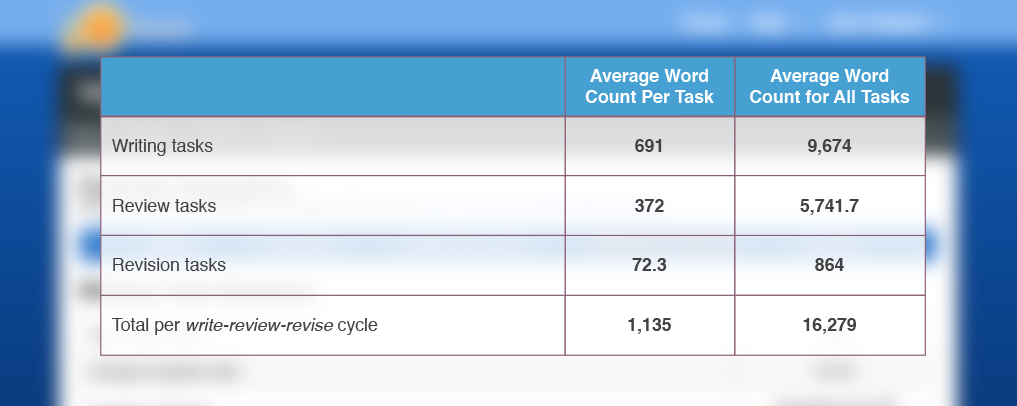

John: Eli Review solved my design dilemma. . . and managed to increase the words students [generated] on a weekly basis via their engagement with and connection to the writing of their classmates. Using Eli Review [resulted] in peer response moving from reading and talking about writing to reading and writing about writing.

Melissa: So, students read more drafts, wrote more comments, and wrote more metacommentary. It sounds like Eli made peer review work better.

John: Eli became much more than a tool for periodic peer review day, an activity that occurred every two or three weeks in traditional courses. In this course it became an everyday occurrence. Students used Eli to try out ideas for topics, work on thesis statements, work with sources, and always write to connect with an authentic audience. I found that Eli helped them become critical readers of their classmates’ work, learning to give feedback that emanates from genuine engagement with the text.

Melissa: The Eli team talks a lot about reviewing shorter bits of writing at all phases in the process, so I’m glad you found that strategy helpful. What makes you confident that students became better, more “genuinely engaged” students through their work in Eli?

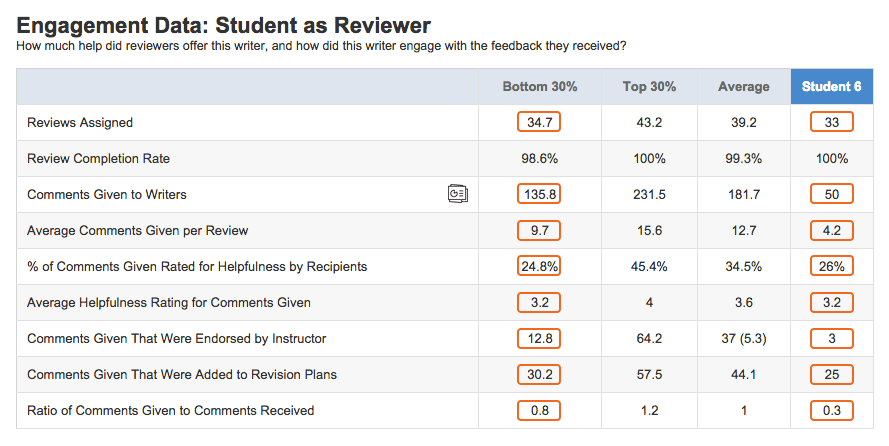

John: A particularly weak student came to my office [in March] and as part of her litany of woes complained that none of her classmates were commenting to her or being helpful on Eli Review. I pulled up her account and pointed out that she had received 70 comments so far, 20 of which she said were helpful and 17 of which I had endorsed, none of which became part of her revision plans or revised essays. This really gave us something to talk about in the remainder of our conference.

Mike McLeod [Head of Product, Eli Review]: My colleagues and I live for moments like this when we get to hear stories from instructors, particularly when Eli’s analytics show them something—either confirming something they already knew, or exposing something they didn’t know—and especially stories of data-driven conversations with students.

John: Well, here’s another one for you. I had a quiet student this term [who broke his silence because of Eli]. I wasn’t too worried about him because he did submit his work on time, and he always participated in giving and receiving feedback on Eli Review. He wasn’t stellar, but he participated. His writing topic was far too general as the semester began. I had difficulty getting him to focus on a specific example of that topic to explore in his semester-long project. He was getting the same sorts of feedback from peers and he slowly started to develop a better focus in his weekly drafts. This was all good, as they say.

But an epiphany, perhaps for both of us, occurred the week after spring break when this reserved student, who had not spoken to me before, came up to me to complain about Eli Review, or rather, about his classmates on Eli Review. He complained that his classmates still had “spring break brains” and that they were not giving the kind of helpful feedback he had been getting before the break. He thought that I should say something. I smiled inside because I can deal with that sort of complaint.

Melissa: So, you’re taking students’ complaints about unhelpful comments as a good sign?

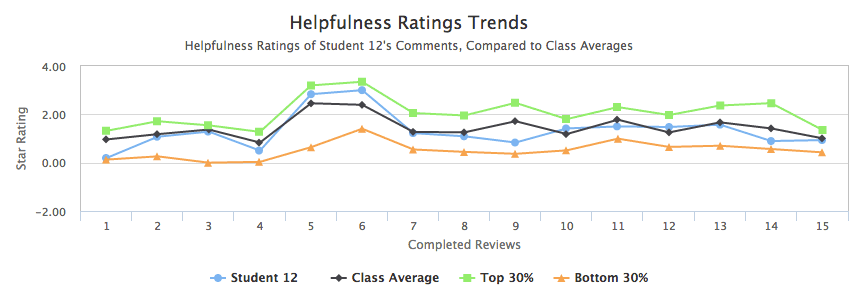

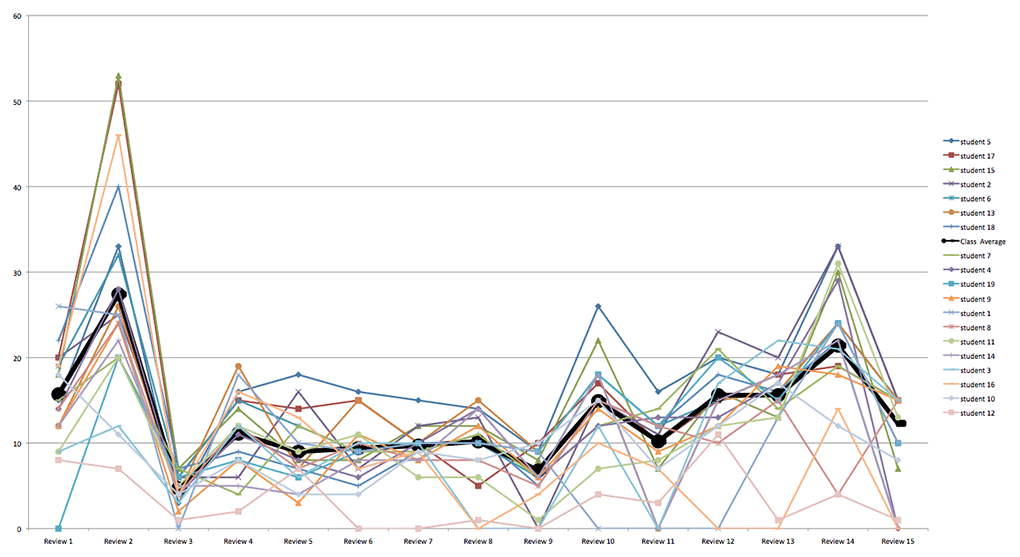

John: Indeed, I looked on Eli Review and the feedback, overall, had slipped. I didn’t focus on this particular student, but I did intervene and refreshed collective memories about what helpful feedback looks like. [I repeated an exercise we’d done earlier in the semester in which I talked through their] comments, noting all of the vague, non-helpful comments. Quite eye opening for them. And it elevated students’ expectations for peer review. They talked about how helpful the feedback was/wasn’t all the time.

Mike: The work that you’re doing helping them learn to differentiate helpful from unhelpful feedback is exactly one of the pedagogical imperatives of the platform. It’s definitely eye-opening for students (and often instructors) when that ephemeral feedback suddenly becomes visible.

John: I saw a dramatic increase in helpful comments, which is most apparent when comparing students who stuck with Eli the whole class and those who didn’t. As in any class, I had some “disappearing students” who “reappeared” two or three weeks before the end of class. They had been mostly absent from Eli Review during what I would call the critical learning period, from weeks two through twelve. They joined Eli Review feedback cycles, and it would be overly generous of me to call their feedback helpful. Their comments to classmates looked very much like the sorts of feedback most were giving in the first week of class: great work, I like your ideas, correct your grammar mistakes, and so forth.

Melissa: Sounds like a research study to me! We’ve always said that Eli helps instructors teach reviewers to give more helpful feedback, and your observation bears that out. Have you seen any other effects of teaching students to offer helpful feedback?

John: Interestingly, these helpful comments have transferred to my online discussion forums, where I set up similar language to guide their discussions. I am seeing some of the most vibrant interactions that I’ve ever seen in working with discussions. I can’t really ascribe a direct cause-effect relationship, though, because I have designed a new course (online workshop-oriented, with student-centered topics) that has many moving parts.

Mike: I know you’re hesitant to attribute that rise to any one factor, but our claims with Eli have only ever been pedagogical–as a stand-alone piece of technology it really doesn’t do much. In the hands of a teacher who’s crafted a solid assignment with clear criteria and focused on the write-review-revise cycle, it can be really powerful.

John: What is it that Jeff Grabill says? “You can’t teach what you can’t see.”

John: Eli has changed what I see and when I see it, so it’s changed what I teach and when I teach it. Eli also puts me much closer to those teachable moments we teachers strive to spot. I can catch them just in time and in the space of their drafts/feedback.

Melissa: Catching students at teachable moments requires a lot of you as instructor, even with Eli’s learning analytics. What strategies have you learned about coaching students just-in-time?

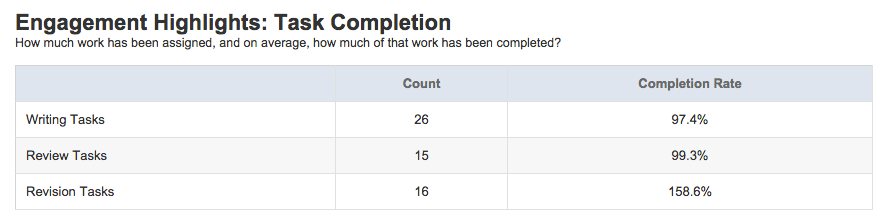

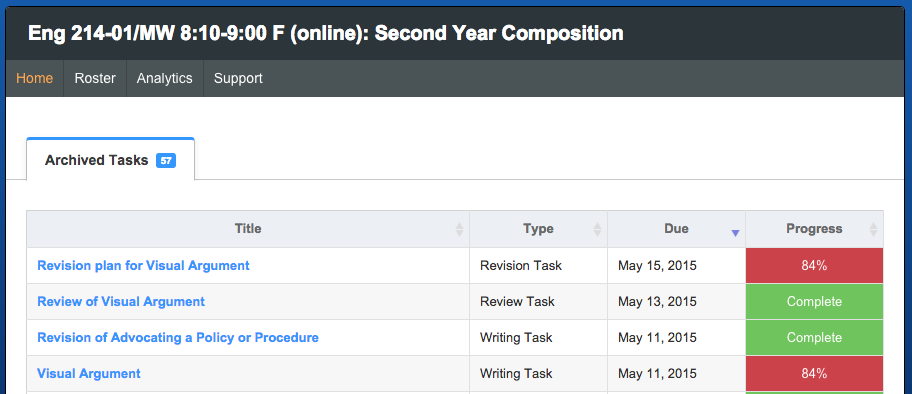

John: The biggest lesson I have learned this semester has to do with engagement, not the engagement in Eli’s analytics, but engagement at the front end: Those students who don’t even submit an assignment. They would be a problem in any class. But they can certainly become a bottleneck in Eli Review.

Mike: You’re talking about what some teachers call the “delinquent student” issue, or what others less politely call the “deadbeat writer” problem. That is, a student who has not completed the writing assignment is assigned to a review group. Online or face-to-face, reviewers can’t review drafts that haven’t been submitted. The easiest way to address that issue in Eli is to drag the delinquent writers out of their review groups and back into the “ungrouped” box or into a special group for writers who didn’t turn their work in.

John: I have definitely learned to wake up early the day an assignment is due and remove those who didn’t submit a draft from the review cycle. This term, I docked students ten points in my course participation grade and let them fend for themselves on revision that week. This is working out because these students tend to shuffle into my office expressing sorrow that they aren’t getting feedback for that week. And in most cases, after a firm admonishment and a touch of encouragement from me, they submit on time the following week.

Next time, I’m thinking about having them earn the points rather than deducting them: positive motivation rather than negative. Assessment of engagement is something I am working through as I plan new courses. I am talking specifically here, not about Eli’s notion of engagement, but about my need to just get students to submit their work on time, which is engagement up front.

Melissa: Your experience is that Eli holds students accountable for their work as reviewers and as writers. Has that accountability had any effects?

John: I have brought some students back from the precipice simply by sitting them in my office, opening their analytics screen and showing them how they compare to others in the class. In all but two cases, I saw engagement in Eli Review’s analytics start to rise.

John: My big message to the class so far has been that in Eli Review, Deadlines Matter. I tried to build community with the idea that we are all responsible to each other in a way that we might not have been in previous f2f classes, that our classmates cannot do their revision plans if we haven’t done our work. Eli helped students viscerally experience how their contributions and delinquencies affected the whole class.

Melissa: We started this conversation by talking about Eli as the center of gravity for your course because of the way it structured tasks, simplified coordination, and thus sped up the process. It also seems that Eli was the center of gravity in that it helped you and students know who was pulling their weight.

John: Certainly. I have a very strong sense of my students’ overall [capabilities]: My weak writers are weak reviewers and weak engagers. This is painfully apparent in all the analytics. Of course, this isn’t news to me. My intuition of their capabilities has always guided mid-term shuffling of peer groups to better balance things. But now I can look at the data on a screen, confirming my intuition and even revealing things I couldn’t have seen in the old f2f peer groups.

Melissa: Do you think only the strongest students benefit from the feedback-rich environment you’ve created in Eli?

John: In classes that I teach I often have strong and weak students, but no middle to work with. Working in Eli Review, I can micromanage groupings more effectively, and earlier in the course, than was possible when relying upon my simple intuitions. With Eli Review, I could see quite early in the course who was strong and who was weak. This micromanagement is something that I can do much more effectively in future courses.

About a third of my students have been giving minimal comments and that, I think, has more to do with my set up and instructions than any flaw in the system itself. I also have a healthy number giving extensive comments.

John: I see potential in the way that Eli might help a struggling student move toward a successful trajectory: in the ways that I manage groups, in the ways they can see how classmates give feedback, in the ways that they become better critical readers. One of my weaker students, still hanging in there, said in a weekly reflection that “the Eli Review feedback just gets better every week. I got so much help this week that I knew exactly what to do in my revision.”

Melissa: That’s really wonderful. You’ve put a lot of time and effort into using Eli effectively with your students and in your new course design. I know there’s a lot you’ll change. What’s your best advice for those instructors who have just heard about Eli and want to give it a spin?

John: Let go of preconceived notions you hold of how to structure peer response in your class. Your old ways of working won’t carry you far with this online scaffold. If you sign on to Eli Review, you have to sign on to the whole package, and that means re-thinking your entire course structure because this system demands daily, not periodic, feedback cycles. Two weeks into my course I had to completely revamp my weekly plan once I realized what Eli Review was capable of adding to my toolkit. Be willing to let go and dive in.