Eli Review’s Analytics show trends in comment volume. In this post, we will demonstrate how to access comment volume data and suggest ways of interpreting it.

Jeff Grabill likes to say that “you can’t teach what you can’t see.” The first thing a teacher needs to see is who is engaging in the activities and who is not. Students who are doing the work are learning; students who aren’t doing the work aren’t learning.

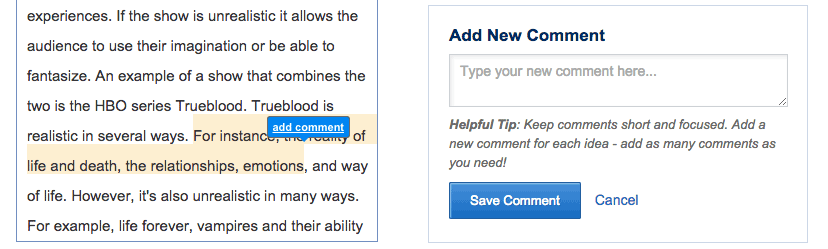

Eli is designed to help students learn to give more helpful comments by providing instructors the information they need to better coach reviewers. Students improve because instructors can see who said what to whom in the comments exchanged during review, helping students recognize exemplars. If instructors have enabled comments—one of many response types that instructors can choose from when designing a review task in Eli—reviewers can make as many comments as they want. If the writing being reviewed was composed in or pasted into Eli, reviewers can connect those comments to a passage of the text.

Eli Review is designed to make learning visible. Instructors can see comments in a number of ways:

- a live feed of comments as reviewers work,

- a post-review report showing student engagement and comment digests, and

- course- and student-level analytics that can show trends and patterns over the duration of a course.

This post will focus on how to use Eli Review’s reports of comment volume to better understand student learning, starting with which students are engaged in review and revision and which students may need extra help. The comment volume graph includes a download option that will allow you to bring your data into other platforms, like Microsoft Excel.

Download Your Trend Data

If you’ve already assigned students a review in an Eli Review course, you already have trend data you can work with:

- Log in to app.elireview.com.

- From the instructor dashboard, click the name of your course.

- Choose Analytics.

- Choose Trend Graphs.

- From the dropdown for Trend Graphs, choose Comment Volume.

- Scroll down and find the option to Download Table as CSV.

If you haven’t taught with Eli before, you can follow along using this example CSV download from a real class; we’ve redacted all identifying markers.

NOTE: In Eli, you can access comment volume charts for individual students by clicking their names in the trend graph or through the Course Roster.

Transforming the Data

Now, you’ve got a file that you can open and manipulate with your favorite spreadsheet program. Here’s how we manipulated ours using Microsoft Excel:

- Open the downloaded CSV in Excel.

- Add a Total column at the end that sums all the comments per row (use a simple SUM formula).

- Sort by the Total column so that the student who commented most is first and the student who commented least is last (sorts are also straightforward).

- Add filters to the headers (here’s a simple tutorial on filters).

- Bold the font in the “class average” row so it’s easier to see.

With filters in place, it’s then easy to reorder the data in these tables. For example, you can sort alphabetically by student, or you can sort by comment volume.
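If you would rather script the Total and sort steps than click through a spreadsheet, here is a minimal sketch in Python using only the standard library. It rests on assumptions we have not checked against Eli’s actual export: that the first column holds the student name, that every other column holds that student’s comment count for one review, and that a blank cell means no comments. The column name `Student`, the `Class Average` label, and every number in the sample are made up for illustration.

```python
import csv
import io

# Hypothetical sample standing in for the downloaded CSV. The real
# column names and layout in Eli's export may differ.
SAMPLE_CSV = """Student,Review 1,Review 2,Review 3
Student 1,4,,6
Student 2,10,12,9
Student 3,16,9,
Class Average,10,7,5
"""

def add_totals_and_sort(csv_text, name_column="Student"):
    """Add a Total to each row, then sort rows from most to fewest comments."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    for row in rows:
        # Assumption: a blank cell means the student made no comments.
        counts = [float(value) if value else 0.0
                  for key, value in row.items() if key != name_column]
        row["Total"] = sum(counts)
    return sorted(rows, key=lambda row: row["Total"], reverse=True)

for row in add_totals_and_sort(SAMPLE_CSV):
    print(row["Student"], row["Total"])
```

As in the spreadsheet version, the class average row is sorted along with the students, so it ends up sitting between the students above it and the students below it.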

Making charts: For this investigation we’ll be using charts that Excel can create from our downloaded data. In Excel, you can insert a chart by clicking the “Insert” tab and then choosing your chart (here’s a detailed tutorial on creating charts).

For our purposes, we’ll make Marked Line charts. Make sure the data has the series showing for the students; you may need to “switch rows/columns.”

You can download our sample Excel file with these graphs already created (note that it’s now a .xlsx file, no longer a .csv, because a CSV won’t support these advanced transforms).

Asking Questions of the Trend Data

We are focusing on just one data point here—comment volume—so what we can deduce from it is necessarily limited. Luckily, as teachers and researchers, we never have to use comment volume in isolation. But it is helpful to dig into one of these metrics in detail to learn how Eli’s reports and data downloads can help teachers find patterns, ask questions, and identify which students may need extra help.

Contextual comments reflect reviewers’ reading skills as well as their engagement with the process. Motivated reviewers who notice many ways in which a draft meets or diverges from the criteria are likely to comment more; less motivated and/or less skilled reviewers are likely to comment less, perhaps because they don’t read the draft according to criteria in the same ways that stronger reviewers do.

Comment volume can make visible reviewers’ reading skills and/or their degree of effort. By thinking about what comment volume could tell us about learning, we can ask good questions about comment volume data and identify relevant interventions.

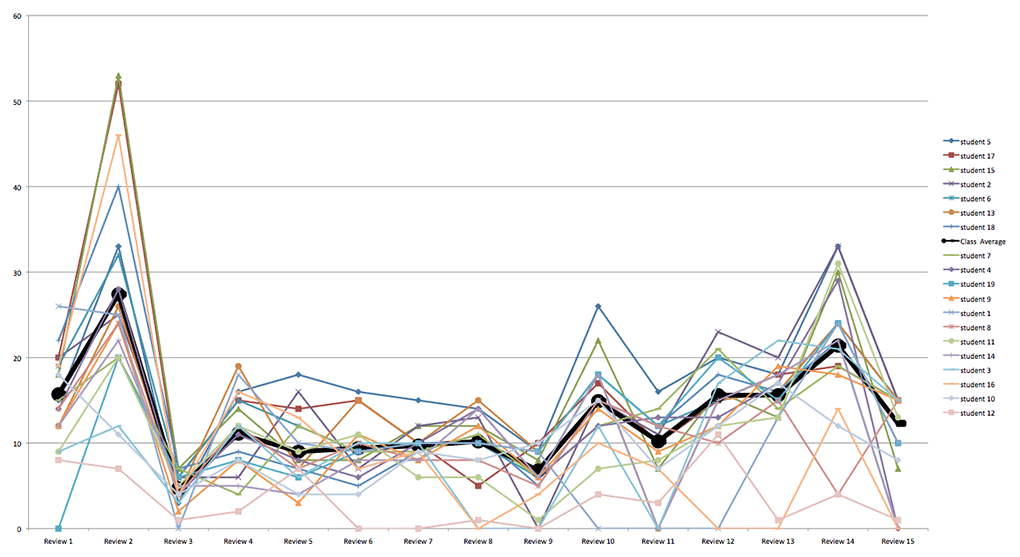

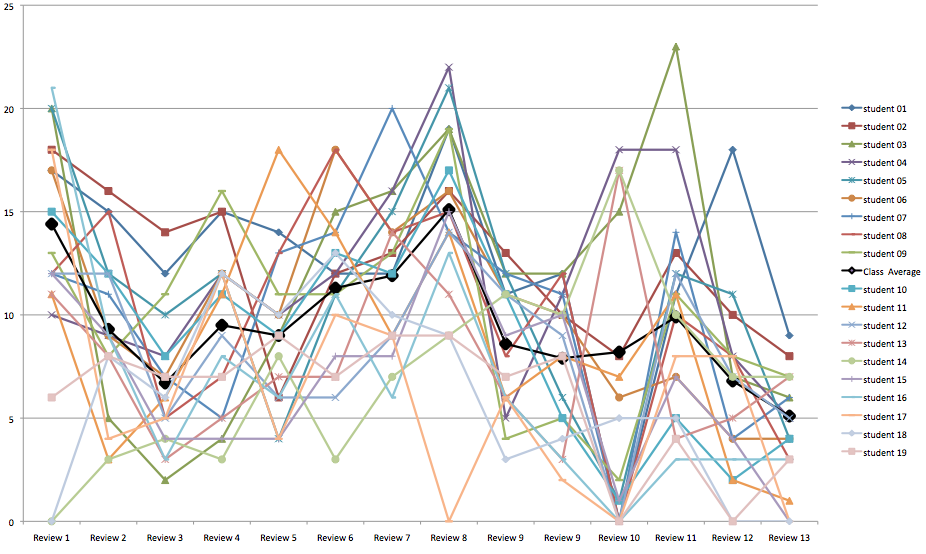

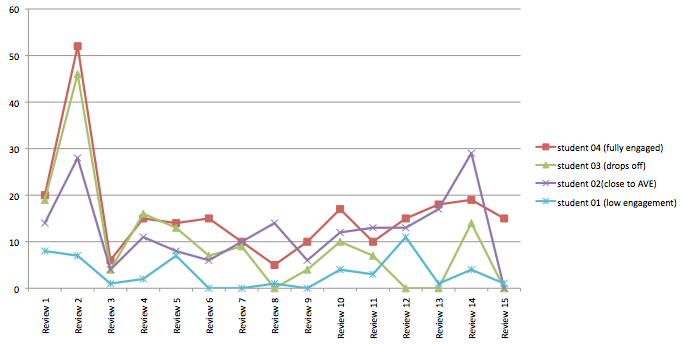

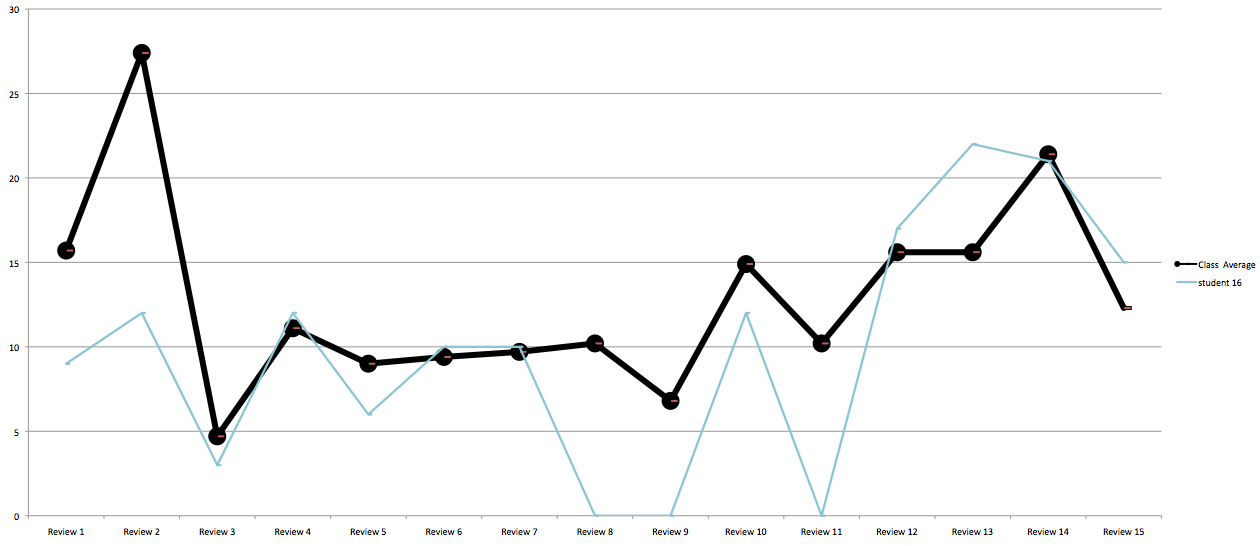

The next two exhibits come from two different classes at different universities. Notice how the bolded class average lets us distinguish between high and low participants.

Students in Exhibit 1 made roughly the same number of comments, though one or more students did not participate in over half the reviews. The class average reflects this inconsistency. In Exhibit 1, the class average separates 7 top contributors from the remaining 13 students. If the bottom few students are left out, the spread between top and low contributors is around 10 comments in most reviews. There are a few students, then, who might need help getting engaged and who might benefit from knowing how their comment volumes differ from their peers’.

Exhibit 2 shows more erratic comment volumes, but most students participated in all reviews, so the class average separates 9 top contributors from the 10 remaining students. This class average is also a median. But, look, the difference between the top contributor and bottom contributor is at least 10 comments and often 15 comments. Top participants are giving around 20 comments while low contributors are giving around 5. A gap of 15 comments is a substantial difference in effort, and calling students’ attention to the range could help narrow it.
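The two readings used for these exhibits, who falls above the class average and how wide the gap is between the top and bottom contributor in each review, can also be computed rather than read off a chart. The sketch below is only an illustration: the numbers are made up and do not come from either exhibit, and it assumes the per-review counts have already been loaded into a plain Python dictionary. The function names are ours, not part of Eli.

```python
# Made-up per-review comment counts for illustration only.
counts = {
    "Student 1": [4, 0, 6],
    "Student 2": [12, 12, 9],
    "Student 3": [16, 9, 0],
    "Student 4": [20, 18, 21],
}

def split_by_average(counts):
    """Return (above, at_or_below) student names relative to the mean total."""
    totals = {name: sum(values) for name, values in counts.items()}
    average = sum(totals.values()) / len(totals)
    above = [name for name, total in totals.items() if total > average]
    at_or_below = [name for name, total in totals.items() if total <= average]
    return above, at_or_below

def spread_per_review(counts):
    """Return the gap between the highest and lowest count in each review."""
    return [max(column) - min(column) for column in zip(*counts.values())]

print(split_by_average(counts))
print(spread_per_review(counts))
```

Students who skipped a review count as zero here, which widens the spread. Leaving them out, as we did when reading Exhibit 1, is an equally reasonable choice as long as you make it deliberately.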

Exhibits 1 and 2 are dizzying because they show full classes. If we look more closely within a single course at just a few students, we can see distinct trends in students’ comments. Excel makes it easy to show and hide data in our charts with a simple filter.

The trends in Exhibit 3 make for easier stories. Student 4 is consistently at the top in comment volume, and Student 1 is consistently at the bottom. Student 2 follows the class average, but Student 3 fizzles after a strong start. Is Student 1 low on motivation, or do the spikes later in the term indicate stronger reading skills in detecting passages that merit comments? Does Student 3 lose motivation, perhaps because of a complicated life or frustration with peer review?

It certainly seems like a complicated life at midterm affected Student 16 in Exhibit 4, but this student rebounded with solid effort toward the end. Was it enough or too little too late?
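One way to put numbers behind stories like these is to subtract the class average from a single student’s count in each review, so that positive values mean the student was above average and negative values mean below. This is a hedged sketch with made-up numbers, not data from Exhibit 3 or 4, and `gap_from_average` is a hypothetical helper name.

```python
def gap_from_average(student_counts, class_average):
    """Per-review difference between one student's count and the class average."""
    return [count - average
            for count, average in zip(student_counts, class_average)]

# Made-up numbers: a student who starts strong and then fades.
class_average = [10, 11, 9, 10]
fading_student = [15, 12, 6, 3]

print(gap_from_average(fading_student, class_average))  # [5, 1, -3, -7]
```

A run of values that slides from positive to negative is the numeric version of a line that “fizzles.” It flags the pattern but cannot tell you why it happened, which is why the questions above still need a conversation with the student.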

These visualizations of comment volume raise a lot of questions.

- To what extent are comment volumes good indicators of students’ ability to identify and respond to passages where revision is needed?

- Are comment volume trends showing instructors something valuable about students’ reading skill and motivation as they are designing interventions day-to-day?

- By talking about such patterns, can instructors motivate more engagement?

- What’s the best way to include comment volume in a summative assessment of engagement with peer review?

We need rich details about students and classes in order to figure out how comment volume relates to learning.

We’d like to invite you to conduct classroom research around the meaning of comment volume in Eli Review. As you work with this data, you’ll probably find better ways to visualize and interpret it. We hope you’ll share your insights with us and others. We’d love to see your charts on our Facebook page or posted to Twitter with our #seelearning hashtag.