The Teacher Development series has, so far, discussed the importance of feedback, strategies for designing effective reviews, and methods for teaching revision. But important questions remain, particularly:

This module looks at how writing instructors can use formative feedback data to gain insight into student progress toward learning goals. It will look at how to derive meaning from qualitative and quantitative review data and how to use that data to deliver timely and useful formative feedback to writers where it can still make a difference - in those crucial moments of revision planning.

The central argument of the Teacher Development series is that feedback is the most important component of learning to write:

Feedback that is formative helps writers make adjustments that improve their writing and learning. Formative feedback is distinguished from the other type - called summative feedback (such as grading) - in two important ways:

Summative feedback is important as a measure of performance that helps both students and teachers understand what a student has mastered and what she still needs to work on. But summative feedback cannot stand in for high quality formative feedback. Research shows that writers often disregard or completely ignore summative feedback.

Consider, by analogy, someone who has taken up running. One goal they might set for themselves is a race. The summative feedback they get from participating in a race might be their completion time at the finish line, while the formative feedback they get might be their heart rate or pace.

The clock at the finish line offers summative feedback that tells runners something about their performance relative to other runners and, if they keep track of their time, about their performance relative to past races. But that doesn’t substitute for formative feedback they might get during the race that can help them adjust and improve their performance. Feedback like this may also help runners learn pacing strategies to improve performance in later races.

Gathering high-quality formative feedback - feedback that is timely, consistent, and offers actionable advice that improves students’ writing - requires that teachers actively track learning while it’s happening. It should help instructors answer questions like:

Time is of the essence with formative feedback. Perhaps the most important question is: how can I know all of these details with enough time to do something about it?

You can’t get this information if you're only doing summative evaluation of student work. By the time you get those answers, the best moments to intervene and have a big impact on students’ learning have already gone by.

Video: Bill Hart-Davidson processing student feedback in Eli Review in real time.

The remainder of this module discusses methods for designing writing activities that gather formative data, and how instructors can use that data once they have it.

Section 2 will look at how peer review activities can yield real-time insights into student learning just as effectively as they can provide feedback to writers. Section 3 will look at how to read review data to identify patterns and trends and help target effective interventions. Section 4 will introduce a curriculum designed specifically to produce this kind of formative feedback, and Section 5 will look in close detail at one review activity - what it was designed to do, what data it produced, and how one instructor acted on it in real-time.

Making learning visible is especially difficult when teaching writing. Writing happens as much in the writer's mind as anywhere else. Even when writing becomes external, it usually sits in front of the writer alone, on a page or a screen that is hard for others to see. Given this invisibility, how do instructors know when students are learning, or how to intervene when they're not?

As introduced in Module 1, the powerful theory of learning that drives a workshop-based approach is called peer scaffolding. Students identify with others - co-learners or peers - and by following along, usually in a group setting, they see how their own performance can improve.

Think about how this works in a dance class, for instance. When students are all working on the same steps, a quick glance can help a student see when he is on the wrong foot and, crucially, how to get back on track. Meanwhile, at the front of the room, the instructor can sweep across the whole chorus line. She sees where she can “lean out” from those who can calibrate and self-correct, and where she needs to “lean in” for those who may need just a little extra individual help.

Dance instructors can do this because the moves we make when dancing are visible to others. The moves we make as writers, on the other hand, are less readily visible without some extra work. Writing teachers do this extra work routinely (asking students to read work aloud or trade drafts, for instance), but depending on the methods we use, we might still see only some of what is going on.

Seeing the big picture - that sweeping view of the class - is the opportunity for real change. Typically, gathering the data necessary to create this view requires substantial coordination by teachers and students. Instructors must gather mountains of paper (or digital bits) when attempting this process by hand. The costs in terms of time and effort are often too high.

The power and promise of formative data lies in the view it offers of individual and group learning. What we can see, we can teach. The power of Eli Review is its ability to provide teachers with timely and actionable views of learning.

The first thing a teacher needs to see is who is engaging in the activities and who is not. This might sound straightforward, but consider walking into a room of students quietly working at their desks: what can you tell just by looking across the room?

There's only so much activity we can see just by watching students work, and the things we can see aren't the most important indicators of learning:

| Questions | Easy to see? | Important to learning? |
|---|---|---|
| Who is writing? | Yes | Somewhat |
| Who is addressing the key assignment criteria in their writing? | No | Yes |
| Who is offering feedback to a peer? | Maybe | Yes |
| Who is offering feedback aligned with learning goals? | No | Yes |
| Who is struggling to understand what the criteria mean? | No | Yes |
| Who is revising? | No | Yes |
| Who is stuck and can't quite see how to revise their draft? | No | Yes! |

We (and our students) need formative data on how students are meeting learning goals (e.g., traits) and how well they understand what they need to do next (revision). But we can’t teach what we can’t see - if our indicators of learning aren’t visible to the naked eye, we have to find other ways to see them.

The data produced by students for their peers during well-designed review and revision activities can also give instructors a real-time picture of these learning indicators, similar to the learning environment of the dance studio.

Surfacing learning indicators through review and revision activities is a little -- no, a LOT -- like doing research! Building a review can be a plan for gathering evidence. It's action-based research conducted in the regular flow of a syllabus.

Designing an activity should start like all research projects: with a set of questions. For writing teachers, happily, these questions are usually the same each time at a basic level (although they can be tailored to particular writing courses):

These questions map directly to the review response types introduced in Module 2 (trait matching, scaled responses, and comments). Let's look at those response types and how they can answer our key questions about student learning, starting with Questions 1 through 4.

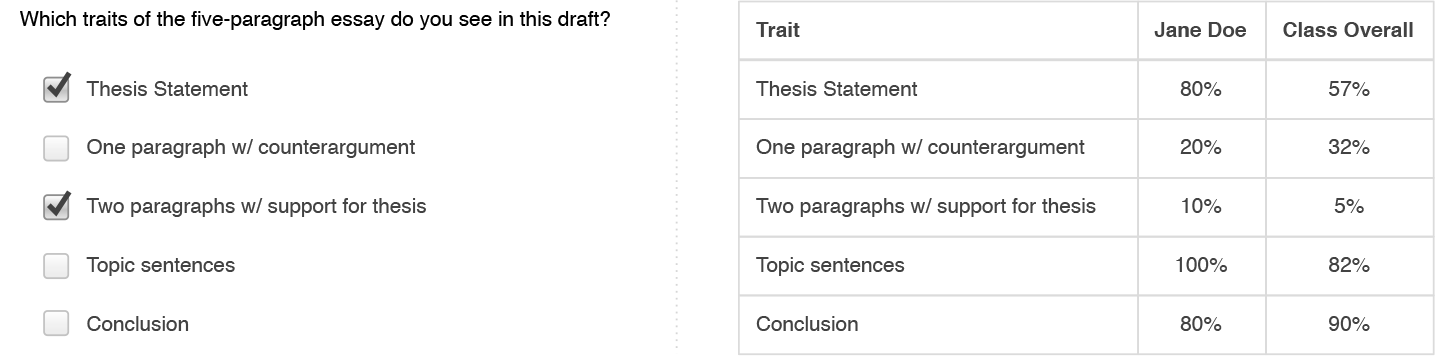

Research Questions: What are students doing in their drafts? What rhetorical moves that align with our learning goals actually were made? Which moves were not made?

Gathering Data: Reviewer responses to trait matching sets will tell us what moves are made, who made them, and who did not. Where the instructor agrees with reviewer responses, we have strong evidence of how students understand what each trait or move looks like and how to identify it in a text.

With a trait matching response type, reviewers select from a series of checkboxes, while instructors see indicators of where those traits are reported for individual writers or a whole class.

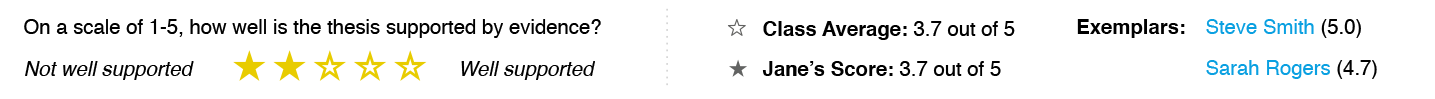

Research Questions: Who does the group see as having made these moves the best? Who struggled? Where students struggled, how could the moves be improved?

Gathering Data: Scaled items show who the group sees as providing strong examples of writing that meets the criteria; where the instructors agree with the peer-nominated exemplars, we have powerful resources for peer-scaffolded learning. With explanations provided by reviewers, we can also see the ways students are able to link criteria with specific examples.

Reviewers see a rating scale, but instructors see aggregate ratings for individual writers and the whole class, as well as exemplars from the most highly rated writers.
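Eli Review builds these aggregate views automatically. For instructors tallying scaled responses by hand or in a spreadsheet, the arithmetic behind them can be sketched in a few lines of Python. This is a minimal sketch, not Eli Review's method or data format. The writer names, scores, and the `summarize_ratings` helper are hypothetical, and it assumes each reviewer gives a 1-5 star rating on a single scaled item.

```python
from statistics import mean

# Hypothetical 1-5 star ratings that reviewers gave each writer on one scaled item
ratings = {
    "writer_a": [5, 4, 5],
    "writer_b": [2, 3, 2],
    "writer_c": [4, 4, 3],
}

def summarize_ratings(ratings_by_writer, top_n=1):
    """Return each writer's average, the class average, and the top-rated writers."""
    per_writer = {w: mean(scores) for w, scores in ratings_by_writer.items()}
    all_scores = [s for scores in ratings_by_writer.values() for s in scores]
    class_avg = mean(all_scores)
    # Candidate exemplars: writers with the highest average rating, best first
    exemplars = sorted(per_writer, key=per_writer.get, reverse=True)[:top_n]
    return per_writer, class_avg, exemplars

per_writer, class_avg, exemplars = summarize_ratings(ratings)
print(f"Class average: {class_avg:.2f}")
print("Candidate exemplars:", exemplars)
```

The exemplar list is only a starting point. As the text notes, the ratings become powerful teaching material only where the instructor agrees with the peer-nominated exemplars.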

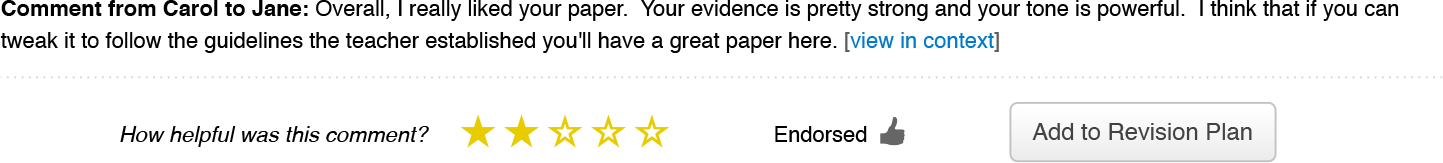

Research Questions: With feedback, are writers able to identify and address their own issues?

Gathering Data: Module 3 discussed how revision plans can give powerful insight into writer uptake from the feedback they receive, but the feedback they give during a review can be just as insightful. If students are prompted to give criteria-driven comments, their work as reviewers (the set of feedback they give to the writers they review) is a meta-discourse on their own ability to recognize those criteria and talk about them constructively.

Reviewer comments are aggregated and can be viewed as a whole; individual comments can also be rated by the recipient and added to revision plans, or endorsed by instructors.

Becoming a researcher in your own classroom means looking for ways to make learning visible. You may need to identify learning objectives more specifically. You also may need to describe each one so that students can easily spot the moves in each other's work.

If our reviews are designed in a way that produces qualitative and quantitative data about student learning, the next challenge is figuring out how to decipher that data and use it to deliver useful, timely interventions for writing students.

Designing review tasks like a researcher not only helps instructors develop more criteria-driven activities but also provides clear indicators of student learning that instructors can use when making decisions about interventions. This is evidence-based writing teaching.

Approaching writing instruction as a researcher also means reconsidering what counts as evidence of learning. Specifically, it’s possible that the best indicators for student learning may not lie in their written drafts.

Instead, the best indicators may lie in the other artifacts of the writing process, particularly the writing they do about their writing - in reviews, in the feedback they give, their revision plans, or in reflections:

Instructors can find useful formative feedback in all the activities of this process. Without good data, teachers may spend a significant amount of time giving formative feedback that is not responsive to students’ individual needs. Because those individual needs are buried deep within the myriad artifacts produced by writers in most writing environments, instructors can find it difficult, if not impossible, to locate and respond to them all. Even identifying trends and tracking whether criteria have been met can consume more time than the instructor has to give; the best interventions require the most labor-intensive reading of student work.

Eli Review flips that scenario. It allows instructors to spend their energy responding strategically to problems revealed in formative feedback. Eli’s methods for gathering these artifacts and surfacing student engagement can lead to something amazing: Writing classrooms become like the dance studio where the instructor can clearly see the moves of the group and of individuals.

To follow the analogy, writing instructors can:

These moves, driven by formative feedback, let instructors provide "just-in-time" coaching. The best coaching equips students to learn from each other and from the process, and research shows peer learning can be more powerful than teacher feedback. Eli is designed to help instructors prepare students to give helpful feedback so that instructors can "lean out." It also helps instructors know where to "lean in" so that expensive individualized feedback is targeted and timely.

There are four powerful strategies for putting formative data to work in the classroom:

The following sections describe each strategy in detail. Keep in mind that strategies are highly contextual and that different learning goals call for different approaches, but these generalized strategies can be put to use in many different ways.

The "sweeping" glance of a dance instructor can be achieved in the writing classroom by looking at the results of trait identification sets or contextual comments. If the items reviewers were asked to identify are aligned with learning goals, the percentage of drafts that contain them can either alert or reassure the teacher.

Returning briefly to the trait table from Section 2, a clear priority for further instruction emerges here: only 5% of students are showing signs of having sufficient supporting paragraphs. We can see that students are relatively okay with topic sentences and conclusions, but they need targeted help with supporting paragraphs.
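The percentages in a trait table are straightforward to compute once you know which traits reviewers reported for each draft. The following is a minimal Python sketch that makes some hypothetical assumptions. First, a draft counts as showing a trait if any reviewer checked it. Second, anything under 50% is flagged for attention. The drafts and results are invented for illustration and do not reproduce the figures above.

```python
# Hypothetical trait-identification results: the traits reviewers checked for each draft
trait_reports = {
    "draft_1": {"topic sentence", "conclusion"},
    "draft_2": {"topic sentence", "supporting paragraphs", "conclusion"},
    "draft_3": {"topic sentence"},
    "draft_4": {"topic sentence", "conclusion"},
}
TRAITS = ["topic sentence", "supporting paragraphs", "conclusion"]

def trait_percentages(reports, traits):
    """Percentage of drafts in which reviewers reported each trait."""
    total = len(reports)
    return {t: 100 * sum(t in found for found in reports.values()) / total for t in traits}

def flag_low(percentages, threshold=50):
    """Traits reported in fewer than `threshold` percent of drafts (cutoff is an assumption)."""
    return [t for t, pct in percentages.items() if pct < threshold]

pcts = trait_percentages(trait_reports, TRAITS)
needs_attention = flag_low(pcts)
for trait, pct in pcts.items():
    print(f"{trait}: {pct:.0f}% of drafts")
print("Priority for instruction:", needs_attention)
```

A stricter rule, such as counting a trait only when most of a draft's reviewers checked it, would require per-reviewer records instead of one set per draft.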

This is one of the easiest ways to start using data, particularly for instructors using Eli Review for the first time. Reading the results of a trait identification set requires very little interpretation and provides immediately actionable feedback.

When instructors follow the real-time, evidence-based approach described in Section 2, trends and indicators become visible very quickly. Trends you can see are improvements you can teach. So how does this information get put to best use? With a review debriefing.

See the complete debriefing video with an overview from Bill Hart-Davidson.

The idea of a review debrief is for the teacher to share, as an expert observer, what she has learned from the feedback exchanged. It can be very valuable for the students in our dance studio to hear, for example, that everybody needs to work on keeping their toes pointed. It can be just as valuable to hear that everybody is doing really well with regard to staying on tempo.

In classrooms that meet face-to-face, a debrief should take place immediately following a review or as soon after as possible, given the constraints of gathering, aggregating, and interpreting the results. In hybrid or online classrooms, a debrief can be recorded and distributed in an equally timely fashion.

In either case, instructors should not miss the chance to debrief a review prior to asking students to create revision plans. This way, the revision plan makes visible how well students have listened to the instructor's coaching.

In the dance studio, an instructor will sometimes pick one dancer to come to the front of the room and demonstrate a move. She may want to point to a particular detail - the way the shoulders are aligned, say, or the way the toe stays pointed. We can do the same thing in a writing course when we pull peer exemplars out to serve as points of departure for large group discussion.

In fact, using models and exemplars in a writing course can be even more powerful because unlike in a dance class, where there is often really one “right” way to do a particular move or sequence, in writing there are often many ways that work equally well. When we find exemplars, we can ask writers to explain their thinking. This provides the best sort of learning by example - an ancient technique for rhetorical learning the Romans called imitatio - wherein it is the overall framework or strategy that is repeated, but not the specific words.

Image taken from an 1867 textbook on elocution; here, the group does physical warm-ups to prepare for public speaking.


When they come from feedback during a review, peer exemplars are not the choice of the teacher but are nominated by the students themselves. This is a great learning opportunity: instructors can frame a discussion of the examples by saying, “This is what the majority of you found to be an effective way to solve this particular problem...what did you see in it that made you rate it so highly?”

Model texts: Exemplars can surface when instructors look at the work of writers who received high scores on rating or Likert scales. By asking reviewers to explain their ratings, teachers have yet another chance to see in explicit terms how learners are making connections among the writing they are doing, the writing they are seeing from others, and the criteria.

Model feedback: If writers are asked to rate the comments they receive (as they are in Eli Review), both the instructor and the reviewer can see which comments a writer found helpful. In a group session, the instructor can call up highly rated comments and discuss these as models, asking: "What makes them helpful?" Instructors can also pause in the middle of a review to discuss helpful comments. Student comments often get better while a review is still happening!
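Eli Review surfaces these comment ratings for you. For instructors who collect comment ratings some other way, pulling out model comments reduces to a filter and a sort. The following minimal Python sketch makes two hypothetical assumptions: writers rate each comment on a 1-5 helpfulness scale, and a rating of 4 or higher makes a comment worth discussing. The `Comment` record, the sample comments, and `model_comments` are illustrations only, not Eli Review's data model.

```python
from typing import NamedTuple

class Comment(NamedTuple):
    reviewer: str
    writer: str
    text: str
    helpfulness: int  # rating the writer gave this comment, 1-5 (hypothetical scale)

# Hypothetical comments from one review round
comments = [
    Comment("ana", "ben", "Your claim in paragraph 2 needs a specific example.", 5),
    Comment("ben", "cal", "Good job!", 2),
    Comment("cal", "ana", "Say what 'sustainable' means for this audience.", 4),
]

def model_comments(records, min_rating=4):
    """Return comments rated at or above min_rating, most helpful first."""
    strong = [c for c in records if c.helpfulness >= min_rating]
    return sorted(strong, key=lambda c: c.helpfulness, reverse=True)

for c in model_comments(comments):
    print(f"{c.helpfulness}/5 from {c.reviewer}: {c.text}")
```

Each selected comment keeps its reviewer's name, so the same list also shows which students are already giving the kind of feedback the class can learn from.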

One of the most important things a teacher can do - and really, one of the reasons the teacher is needed at all in a studio setting where students learn readily from one another - is to identify when students need individual help. A teacher may want to:

A writing class is, in this way, more like a yoga studio than a dance class, where the goal is to try and get everyone to do the same choreography.

The yoga instructor wants to help each individual student improve their practice, down to the details of improving each move. Doing that requires good data, which comes from the ability to watch students' practice carefully enough to find patterns beyond those visible in a single performance.

In the case of both the best performers and the struggling students in a writing course, performance on a single task may be a poor indicator of learning.

For the student who performs poorly, instructors want to know as best they can where the specific problem lies. They can find out by reading the feedback the writer gives to other students. If the advice is good - identifying claims, offering suggestions that would strengthen the evidence given - then the instructor can simply say: “psst...try taking some of your own advice!” If, on the other hand, the feedback to others shows confusion about the differences between claims and evidence, the instructor knows more precisely where this student could use some coaching.

The proficient student who aces a paper may not be learning much at all. For a teacher, seeing these kinds of performances is nice. But a dance instructor is not really interested in seeing someone with a perfect time-step tap away every time he comes to rehearsal. The instructor wants to push that student to learn something new, too, perhaps something for which his perfect time-step provides a foundation.

Sometimes insights from formative feedback will fuel additional rounds of "research" in our own classrooms. As we learn more about what students have or haven't learned, we have to go back to the drawing board to respond to what we learned about their learning. In this way, our instructional choices are based on evidence.

The next section includes an entire series of exercises meant to help students learn to make specific moves as writers. This set of activities was designed by two instructors to yield formative data about student progress.

With some planning and coordination, the feedback we ask students to give one another can be just as useful for helping us observe their progress toward achieving our learning goals. In this example, two instructors developed a curriculum in which they shifted their focus from providing feedback on drafts to measuring progress through quantitative and qualitative reviewer feedback and targeted interventions.

While the specific tasks in this curriculum are targeted at a higher-ed audience, the pedagogy behind their design is applicable at any level.

Bill Hart-Davidson and Jeff Grabill are two veteran instructors who collaborated on a writing course that needed to be taught in a compressed timeframe - a very abbreviated 5-week course rather than a standard 15-week course. To do this, they designed a series of exercises that emphasized the moves writers make and provided ample time for students to practice these moves in rapid write-review-revise cycles (it was this design challenge that led to the creation of Eli Review).

Their curriculum consists of two modules, each of which takes just two weeks to complete despite building in a rich set of review and revision opportunities for students. The primary learning goal is to build familiarity with the six essential rhetorical moves of technical writing. These moves make up many of the familiar features we see in common technical writing genres such as instructions, user guides, and tutorials. They are:

The exercises Bill and Jeff designed offer students the opportunity for deliberate practice (that is, conscious focus on each of the key moves) with numerous repetitions focused on these moves. Focusing on these discrete moves not only provides students opportunities to practice transferable writing skills, but also provides instructors a clear set of learning goals that are both coachable and observable in student work.

As described in Section 2, Jeff and Bill use a variety of response types in their reviews (trait identification, rating scales, Likert scales, and comments) to give reviewers discrete, criteria-driven prompts to guide their responses to writers. What they learned from the experience of working with this data, however, is that it provided them with powerful formative feedback that let them be "agile teachers":

Their instructional design eliminated a lot of the guesswork of teaching, giving them real data about student uptake that they could act on immediately, even in the same session.

One of their writing assignments, the Children's Nutrition Guide, occurs after writers have been introduced to and practiced the first set of moves (writing to guide action). This task gives writers a common technical communication challenge: translate technical information for an audience of non-experts (in this case, children between the ages of 8 and 14). The aim of the task is to help kids make healthy choices in fast-food restaurants. Because the writers are addressing children, all three of the key moves in focus for this unit (defining, describing, and presenting options) are in play and are equally important.

Here are the instructions for the Nutrition Guide:

As an intern for KidsHealth.org, you've been asked to create a new resource that helps kids between the ages of 8-14 make good nutrition choices when they visit fast-food restaurants such as McDonald's, Burger King, etc.

Your task is to reuse and repurpose information already available on the site in the “Stay Healthy” section, especially in the Fabulous Food area.

Your guide should be something that kids can print out and take along with them when they visit a restaurant. This means it has to be short - like a card-sized guide when folded - and it has to be very focused on giving kids the information they need to make good choices.

You are free to use text and/or images from the site in your flyer.

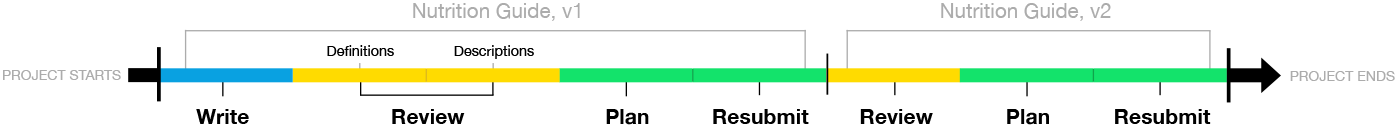

The assignment sequence Bill and Jeff designed puts student drafts through three reviews and two rounds of revision, with an emphasis on review:

This next section looks in detail at the definitions review. It examines how the review was designed to help reviewers closely scrutinize texts for those moves and provide helpful feedback to writers. It also shows how the review gave Bill and Jeff formative feedback that offered insight into student progress and showed how well students understood that move.

In order for an instructor to know if a particular exercise is having the intended effect - that is, to know if students are actually learning - the instructor must have some sort of formative feedback that will make visible students’ progress while still leaving the instructor room to effectively intervene where students need practice.

While this section elaborates on a specific exercise in the curriculum described in Section 4, the learning goals behind it demonstrate how instructors can build metrics to gather formative feedback into the review process and act on them to help their students improve.

As introduced in Section 4, the Children’s Nutrition Guide in Bill and Jeff’s technical communication curriculum is a writing assignment that builds on all of the writing moves that students have previously learned and practiced.

Before students produce a draft, they are given direct instruction in the moves of a particular exercise; in this case, the move of defining. Students then write with a clear sense of what that move looks like. They’ve seen examples, and they’re ready to practice.

Students' drafts reveal their ability to execute the moves. In the definitions review, Bill and Jeff are looking for signs of the following:

To answer those questions, they designed this review:

To answer the question of "who's getting it?" with the defining move, the review has five response types: 1 trait identification set, 2 rating scales, 1 Likert scale, and both contextual and summative comments. Each response type is intended to reveal a different insight into student understanding of defining:

Trait Identification: Read the draft and indicate which types of definition you see:

- Contrastive Detail

- Relational Detail

What Bill's looking for: "With the trait set, I’m asking reviewers to identify, within definitions, where writers have used two important kinds of detail. This is a check not only on the writers’ understanding, but also on the reviewers’ ability to recognize the difference between the two types. Contrastive details are often familiar once pointed out, but non-obvious to students learning to be more precise in their technical writing."

Rating Scale: how well has the writer used relational details in definitions to help readers make a connection with their own experience? (5 stars, explain answer)

Rating Scale: how well has the writer used contrastive details to help readers distinguish new ideas or objects from others? (5 stars, explain answer)

What Bill's looking for: "These two rating scales work together. They are, in research terms, a triangulating move to get a second set of data points regarding contrastive and relational detail. In these response items, I ask reviewers to identify who is using these features well and who is not using them at all, or as effectively as they could (hence the scale). Along with the trait matching data, these will give me a very clear picture of who gets this concept and where, as a group, we need to spend more time revising to improve definitions."
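One way to picture this triangulation is to place the two data sources side by side for each writer. The following Python sketch sorts writers into rough groups. It assumes two hypothetical cutoffs: a draft counts as using contrastive detail if at least half its reviewers checked that trait, and an average rating of 3 stars or more counts as "using it well." The names, data, and `triangulate` helper are illustrations, not Bill's actual procedure or Eli Review's output.

```python
from statistics import mean

# Hypothetical data for one detail type (contrastive) from the definitions review.
# trait_hits: one True/False per reviewer ("did this reviewer check Contrastive Detail?")
trait_hits = {
    "writer_a": [True, True, True],
    "writer_b": [False, False, True],
    "writer_c": [True, False, False],
}
# contrastive_ratings: the 1-5 star ratings reviewers gave on the contrastive-detail scale
contrastive_ratings = {
    "writer_a": [5, 4, 4],
    "writer_b": [2, 1, 2],
    "writer_c": [4, 5, 4],
}

def triangulate(hits, ratings, hit_cutoff=0.5, rating_cutoff=3.0):
    """Compare trait reports with ratings for each writer; cutoffs are assumptions."""
    result = {}
    for writer in hits:
        hit_rate = sum(hits[writer]) / len(hits[writer])
        trait_ok = hit_rate >= hit_cutoff
        rating_ok = mean(ratings[writer]) >= rating_cutoff
        if trait_ok and rating_ok:
            result[writer] = "getting it"
        elif not trait_ok and not rating_ok:
            result[writer] = "needs coaching"
        else:
            # The two sources disagree, so the draft itself is worth a closer read
            result[writer] = "mixed signals: read the draft"
    return result

for writer, status in triangulate(trait_hits, contrastive_ratings).items():
    print(writer, "->", status)
```

The "mixed signals" group matters as much as the other two. When trait reports and ratings disagree, the problem may lie with the reviewers' grasp of the concept rather than with the writer's draft.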

Likert Scale: This draft has terms or concepts that should be defined but aren't. (5 stars, explain answer)

- Strongly Agree

- Agree

- Neither Agree nor Disagree

- Disagree

- Strongly Disagree

What Bill's looking for: "This Likert scale helps me identify how many of the drafts in the whole class still contain terms that need to be defined. If the percentage of 'Agree' and 'Strongly Agree' responses is high, we'll take some time to talk about why they may have missed these."
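The share Bill describes is a simple count over the class's responses. The following minimal Python sketch uses invented responses and a hypothetical cutoff of 40% for what counts as "high." Each instructor would choose their own threshold, and nothing here reflects how Eli Review itself reports the scale.

```python
from collections import Counter

# Hypothetical responses to the Likert item
# "This draft has terms or concepts that should be defined but aren't."
responses = [
    "Agree", "Strongly Agree", "Disagree", "Agree",
    "Neither Agree nor Disagree", "Disagree", "Agree", "Disagree",
]

def agreement_share(answers):
    """Percentage of responses that are 'Agree' or 'Strongly Agree'."""
    counts = Counter(answers)
    return 100 * (counts["Agree"] + counts["Strongly Agree"]) / len(answers)

DISCUSS_AT = 40  # hypothetical cutoff for "high"; choose your own

share = agreement_share(responses)
print(f"{share:.0f}% of responses say undefined terms remain")
if share >= DISCUSS_AT:
    print("Plan a class discussion of missed definitions.")
```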

Contextual Comments: Please indicate where there are terms or concepts that are NOT defined that you think should be.

What Bill's looking for: "My prompt for contextual comments in this review is a narrow one, asking reviewers to point to terms that may need to be defined but are not in the current draft. This is a high-level concern for readers as well as writers: anticipating, based on audience needs, where additional detail may be needed."

Final Comment: Please offer some advice for the writer about how to improve their definitions.

What Bill's looking for: "The final comment prompt asks for a synthesizing statement from reviewers: 'How can the writer improve their definitions?' I am hoping to see in these responses how well reviewers combine the conceptual understanding of the two types of detail (contrastive and relational) with an analysis of the intended audience. At this stage, it may well be the most important indicator of each student's learning progress (even more so than their own written drafts!)"

With the learning goals for this review laid out, and the review response types in place that should yield data from student reviewers, it’s time to actually see this review in action. The next section shows how an instructor can make decisions about student engagement and progress and act on them in real time.

This video was captured from a live session of Bill Hart-Davidson’s Technical Writing course in the Professional Writing program at Michigan State University in January 2015. These clips show how he framed the review for his students, how he interpreted the data they generated during their peer reviews, and how he used the insights he gained from that data to evaluate how they were progressing in their understanding of the defining move and next steps.

About Eli Review: Bill used Eli Review to coordinate this review process because it was designed to facilitate this kind of review and gather the results for teachers quickly. Teachers can use analog methods and other digital tools to accomplish similar results, but these can be time-consuming and require a lot of additional work that cuts into instructional time.

In the video below, you'll see Bill make the following moves to get his students started on the defining review:

While students are reviewing, Bill watches a live feed of their feedback in Eli Review and makes judgments about what he sees:

Note that in the clip above, Bill makes decisions about student progress without reading their writing. Not yet. He is processing the feedback generated during the review and using it as a window into their learning. This is not a substitute for reading their drafts; he will still read them. He just doesn't have to read them first, so he can teach right away.

Having processed the results from the review, Bill debriefs with his students about trends he observed and their next steps. Specifically, he:

Finally, with the review complete and students headed home, Bill breaks down what happened, addressing:

After class, Bill leaves confident that students have grasped the defining move and are well-prepared to make effective revisions. He’ll still read their drafts, and he will offer feedback on the revision plans they create to help them process their own review feedback. This kind of reflection completes the cycle we discussed in Module 1 that makes for such powerful learning results.

Writing teachers have long been limited to summative feedback to help them gauge whether or not their students have achieved their learning goals. This method argues, and Bill's work with formative feedback shows, that writing teachers can design their review exercises to give them powerful insight into student learning. Instead of guessing, writing teachers can make evidence-based decisions about student progress. As Bill says in the clip above, "no more guessing."

Bill and Jeff's entire curriculum, including all of the prompts and review protocols, is free to reuse and adapt in the Eli Review Curriculum Repository.

When writing instructors approach review and revision activities like researchers, they can get a useful picture of student progress toward learning goals, see which students are getting it and which aren't, and know where intervention may be most needed and effective.

Explore additional entries in this four-part Teacher Development Series to take these ideas further, or find examples of Eli Review in action and how to use it effectively.

Looking for ways to build a classroom culture rich in feedback and revision? We have activities and readings that can help you set students’ expectations and get everyone off to a helpful start:

Curriculum materials and activities:

Reading materials for students:

Image credits: Elvert Barnes (perspective), N i c o l a (15216811@N06), Travis Warren (travis_warren123), David Thompson (david23), Laura Wasowicz, Fort George G. Meade Public Affairs Office (ftmeade)

These materials are licensed under a Creative Commons Attribution-NonCommercial 4.0 International License. This means you are free to copy and redistribute them in any medium or format and to remix, transform, and build upon the material, as long as you provide proper attribution to the authors (Eli Review, or Michael McLeod, Bill Hart-Davidson, and Jeff Grabill). Commercial use of this content is prohibited.