Eli Review and Computers & Writing have a long history. Bill Hart-Davidson and I first introduced Eli publicly at #cwcon 2010 at Purdue. Eli was still in its prototype stage when we introduced it – we hadn’t even added “Review” to its name yet, and you can see in the slides from our 2010 panel that it hardly resembles the product we use today.

That panel was a test of sorts – we had an idea about how Eli could measure a set of student interactions and determine which students were giving their classmates the most helpful feedback. We engaged the attendees in an exercise: we gave them a set of student comments and asked them to identify the most helpful students, and then compared their findings against Eli. Our C&W colleagues helped us confirm that Eli could identify helpful students, and validated the idea that this would be helpful in the classroom. That feedback told us we were on the right track, which eventually led us to develop a patent and start a company to make Eli available to folks other than ourselves.

Two years later, in May 2012, Bill and I were on another Computers & Writing panel, this time at NCSU in Raleigh. There we got to introduce Eli Review, no longer a stick-and-duct-tape prototype but a soon-to-be-launched, publicly accessible product.

You can view our slides from that talk here. We discussed peer learning pedagogy, led attendees through a brief feedback activity, and covered some of the technical infrastructure that made Eli work, but our purpose was to leave lots of time at the end to listen to how our scholarly community might imagine Eli’s future as a research platform.

We invented Eli Review first to facilitate peer learning environments, but it was obvious to us that the app also had the potential to support the work of scholars interested in investigating how students learn to write and how instructors learn about teaching.

We proposed this panel at #cwcon to discuss that possibility with our peers. We described the type of data Eli collects and suggested that we might build an application programming interface (API) that would enable researchers to ask questions of that data. After a long conversation, some of the questions that came out of that discussion included:

| Data Eli Review Values | Potential Questions to Ask of Eli’s Data |

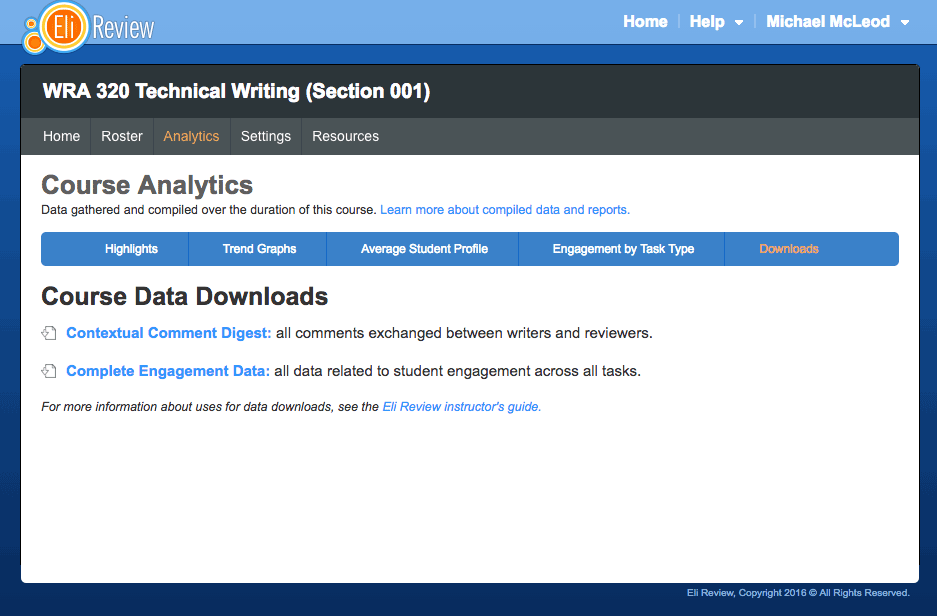

Flash Forward: In the four years since our panel at #cwcon, we’ve made it possible for instructors and researchers to answer their questions and do research using Eli Review data. While we don’t have a publicly accessible API, we’ve released several substantial features that give instructors convenient access to the data produced by their students during individual reviews or over the duration of a whole course.

Because we’d designed our data to be rich and flexible from the beginning, we were able to release these features and make them retroactively useful to all previous courses. We’ve continued to release increasingly powerful features into the app and worked with researchers to develop custom exports for a variety of purposes.

Since we began making student data easily accessible, instructors have been using it in their own courses and also planning larger studies across courses. Here are some researchers currently engaged in projects with data from Eli Review:

- Nedra Reynolds at the University of Rhode Island is working to identify high-impact interventions by instructors teaching STEM students to be stronger communicators, as part of an NSF-funded project called #SciWriteURI.

- Ryan Omizo at URI is examining something writing instructors have long held: that peer learning can develop students’ awareness of and ability to talk about their writing strategies (their metadiscursive ability), and that these changes lead to better writing performances over time.

- Mike Edwards is studying the way feedback from peers and from the instructor – aided by Eli’s detailed student reports – can change writers’ habits for the better. Mike’s former colleague at West Point, Paul Johnston, is similarly focused on changing writers’ habits as reviewers as a means to improve their writing and their leadership skills.

- Susheela Varghese & Jennifer Estava-Davis at Singapore Management University are working to discover linkages between specific writing behaviors – e.g. revisions guided by feedback on higher-order vs. lower-order concerns – and improvement over time in written products.

- Researching in your own class – in addition to working with large data sets, instructors can export data in small batches after single activities and do interesting things like word cloud visualizations or hacking the review comment digest.
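As a rough illustration of that last item, here is a minimal Python sketch of the word counting that sits behind a word cloud. It assumes the exported comments arrive as CSV text with a column named `comment`; that column name, the sample rows, and the tiny stop-word list are all hypothetical stand-ins, not Eli Review’s actual export format.

```python
import csv
import io
import re
from collections import Counter

# Hypothetical stand-in for an exported comment file; the real export
# layout may differ, and the "comment" column name is an assumption.
SAMPLE_EXPORT = """reviewer,comment
A,Your thesis is clear but the evidence is thin.
B,Add more evidence to support the thesis.
C,The conclusion repeats the thesis without new evidence.
"""

# Small illustrative stop-word list; a real analysis would use a fuller one.
STOP_WORDS = {"a", "the", "is", "but", "to", "your", "more", "without", "new", "add"}


def word_frequencies(csv_text, column="comment"):
    """Count how often each non-stop word appears in one CSV column."""
    counts = Counter()
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Lowercase the comment and keep only runs of letters/apostrophes.
        words = re.findall(r"[a-z']+", row[column].lower())
        counts.update(w for w in words if w not in STOP_WORDS)
    return counts


if __name__ == "__main__":
    # Print the three most frequent words and their counts.
    for word, count in word_frequencies(SAMPLE_EXPORT).most_common(3):
        print(word, count)
```

The resulting counts could be handed to any word cloud tool, or simply read as a ranked list of what reviewers talked about most in a single activity.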

Our work with colleagues at Computers & Writing in 2010 and 2012 helped us arrive at this point, and we’ll be presenting in session #g5 about our work on research databases and how we might effectively design a corpus builder using Eli Review data.

We routinely say that Eli Review was built for teachers, by teachers, and that isn’t a cynical marketing ploy. The truth is, we are teacher-scholars who work with other teacher-scholars. We do this because we are heavily invested in our professional communities and in advancing the field’s knowledge about how people learn and about teaching best practices.

All of the colleagues mentioned in the list above work with members of the Eli team just as we would in other research situations. Eli’s ability to help researchers better understand learning in writing by offering access to one-of-a-kind data is an opportunity we take very seriously. If you want to research in your own class, or organize a research initiative in your program, tell us – we’re listening, and your input might just lead to a new feature that could help other teachers, too.