Eli Review is more than just a web application. We partner with institutions to help instructors create feedback-rich classrooms that engage students and achieve learning goals. At Singapore Management University, our partnership began a year ago in the design of a pilot for the Programme in Writing and Reasoning (PWR), which is comparable to a second-semester first-year composition course in the US. Bill Hart-Davidson worked closely with Jennifer Estava Davis and Susheela Varghese to design a course around new objectives; in those courses, Eli’s analytics measure students’ achievement. (Stay tuned for reports from that exciting research!) Bill and Melissa Meeks also reviewed new writing assignments in the PWR curriculum and offered advice about how to prepare other staff members to be successful with Eli this fall.

This fall, 1,800 students at SMU will be using Eli in the PWR program. The richness of our year-long conversation also inspired Susheela Varghese to pilot Eli in another course, this time in the MBA program. She brought Melissa quite a challenge. MBA students in the “Influence” Comm 637 course meet for just eight Saturdays. Developing students’ ability to give outstanding feedback and to use revision advice to improve their own work was a goal that meshed well with the program’s mission to prepare leaders for the business world.

To get started, Susheela needed to turn a rubric that she described as “painful to use” into a set of more focused review tasks in Eli. She and Melissa worked together to get these ready for students, and they offer their observations and strategies here as a model of the kinds of thinking that help instructors design effective reviews using Eli’s different response types.

Challenge: Transforming a Summative Rubric into a Formative Review Task

Susheela’s initial difficulty was that she had a summative assessment rubric. Summative feedback is great for grading, but it is by definition not formative feedback. When you put a summative rubric into a peer review system, you get peer grading. But Susheela wanted what Eli offers: peer learning with formative feedback for evidence-based teaching. Part of this transformation, then, was making sure that reviewers’ feedback was less evaluative and more descriptive, goal-directed, and goal-referenced.

Challenge: Finding a Focus for Each Round of Review and Revision

Susheela’s second problem was that she didn’t like her rubric. She told Melissa:

There are too many items in the trait identification component. I found it painful to use at the time since I would add one line of comment/evidence next to each trait, but I had already used it with some papers in that batch so I just persisted till I finished. . . . Any suggestions on how to cut this down?

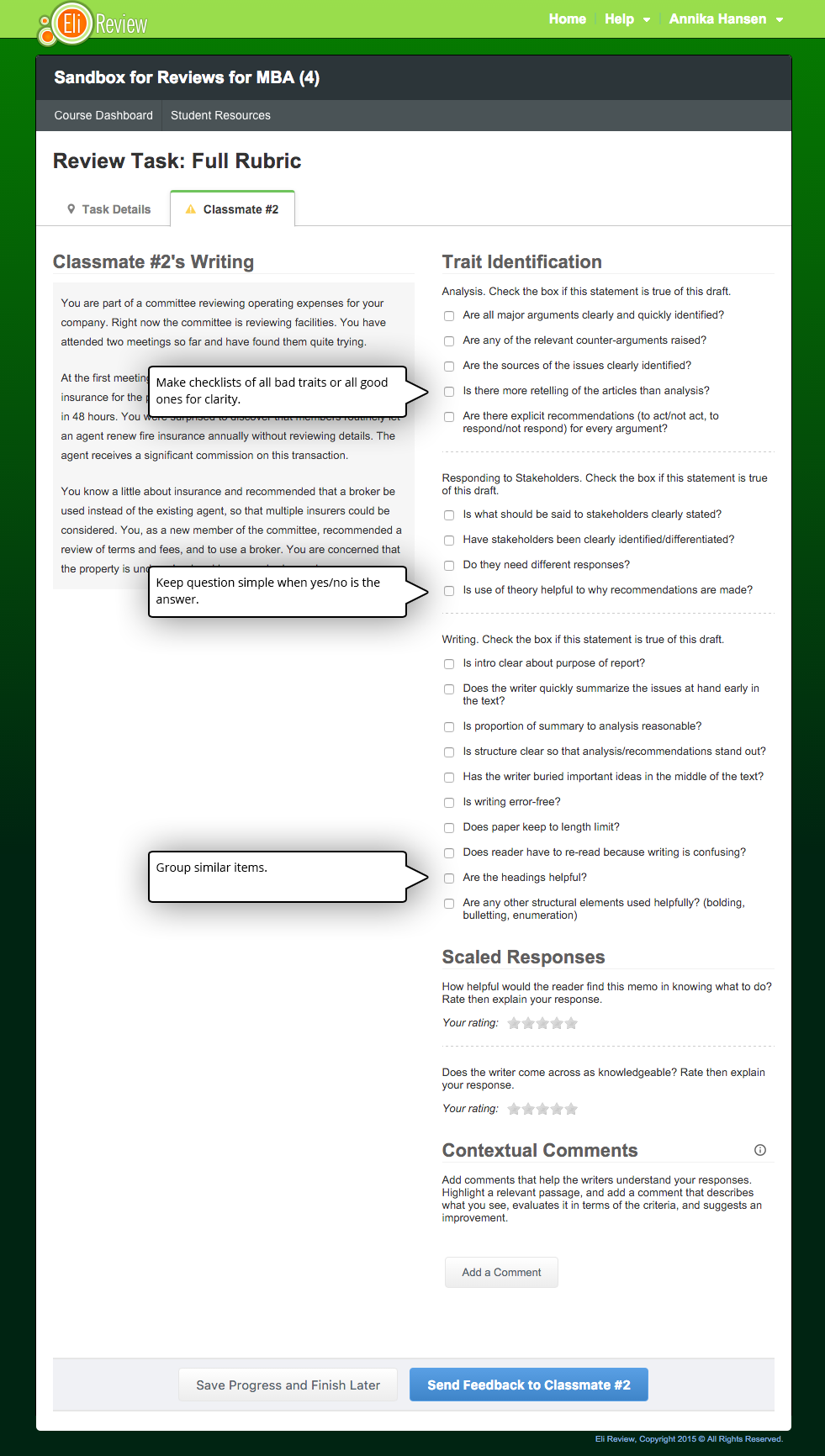

Here’s what Susheela’s comprehensive, thoughtful rubric would have looked like if dropped directly into Eli Review:

Feel agog after just skimming the questions? Imagine having to read 2-3 drafts AND give feedback in that review as a student who is just beginning to understand the criteria.

Talk about cognitive overload!

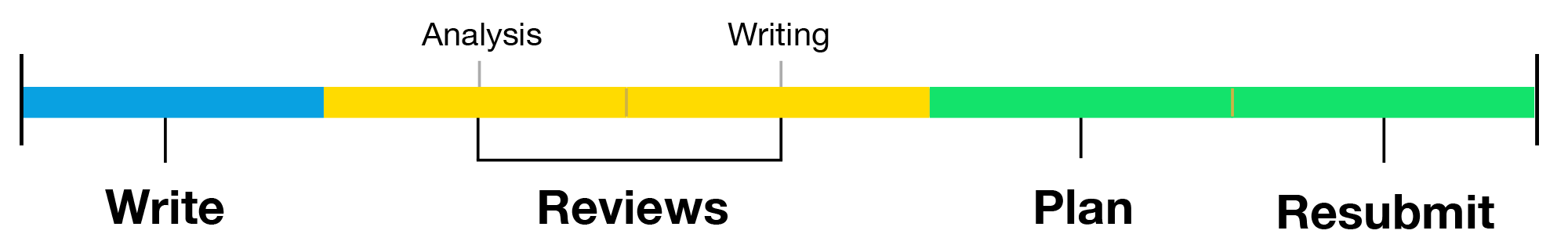

Susheela’s smart instinct was to divide the rubric into two reviews, one for analysis and one for writing. This better matches her learning goals for the assignment as well, which include helping students to become good critical readers as well as better writers.

This transformation results in a new workflow for students:

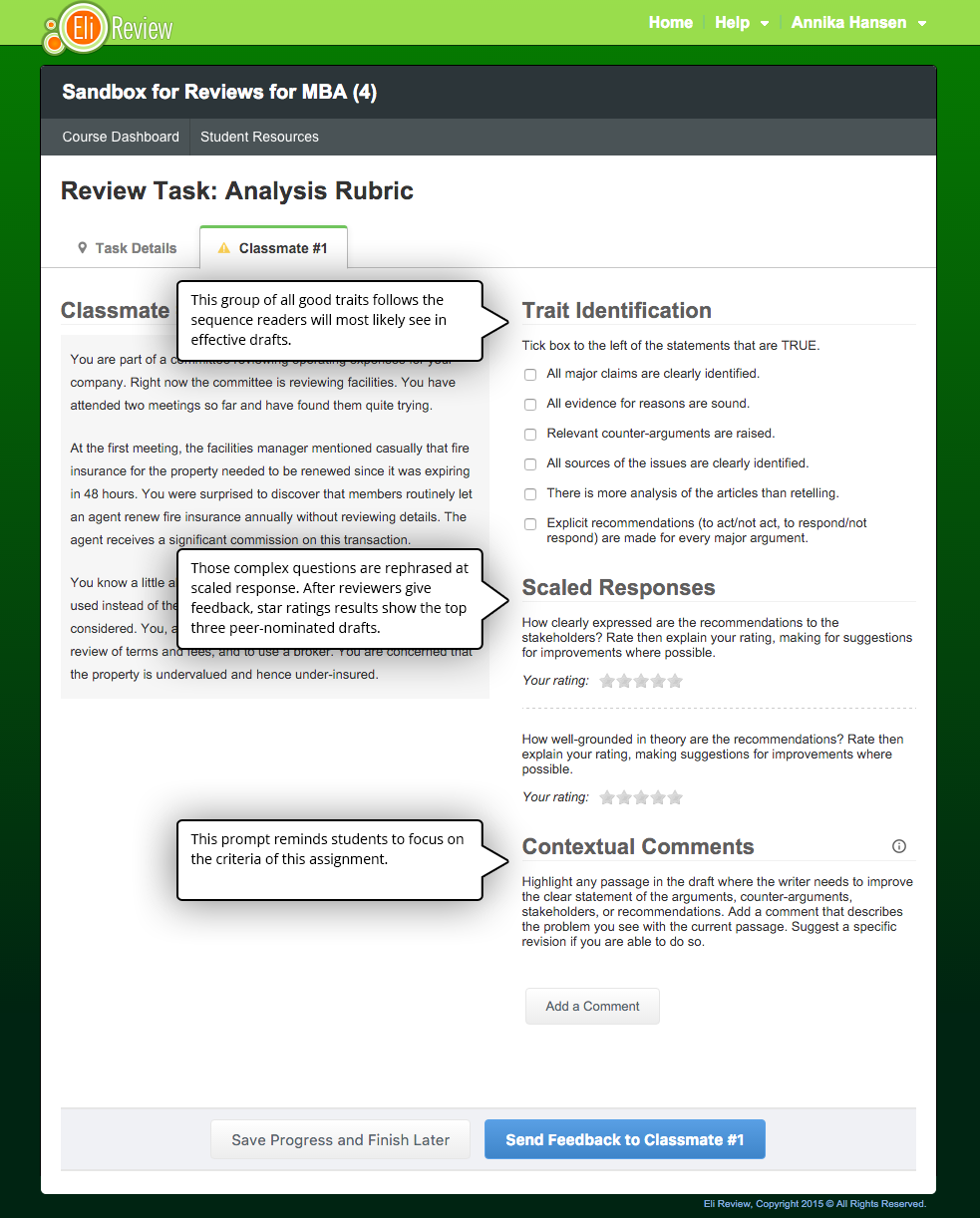

Following Susheela’s lead, Melissa reworked the rubric into two separate reviews, borrowing much of the language from the original.

This analysis review is more focused. It also scaffolds the way reviewers read the draft. The criteria checklist is ordered to reflect the sequence of moves writers should make in the draft, so looking for the criteria should help reviewers read the full draft fluidly. Having looked for the criteria and read the whole draft, reviewers are better positioned to make complex judgments about the clarity of recommendations and the useful application of theory. To encourage students to focus on analysis, the prompt reminds them to give helpful, goal-referenced comments.

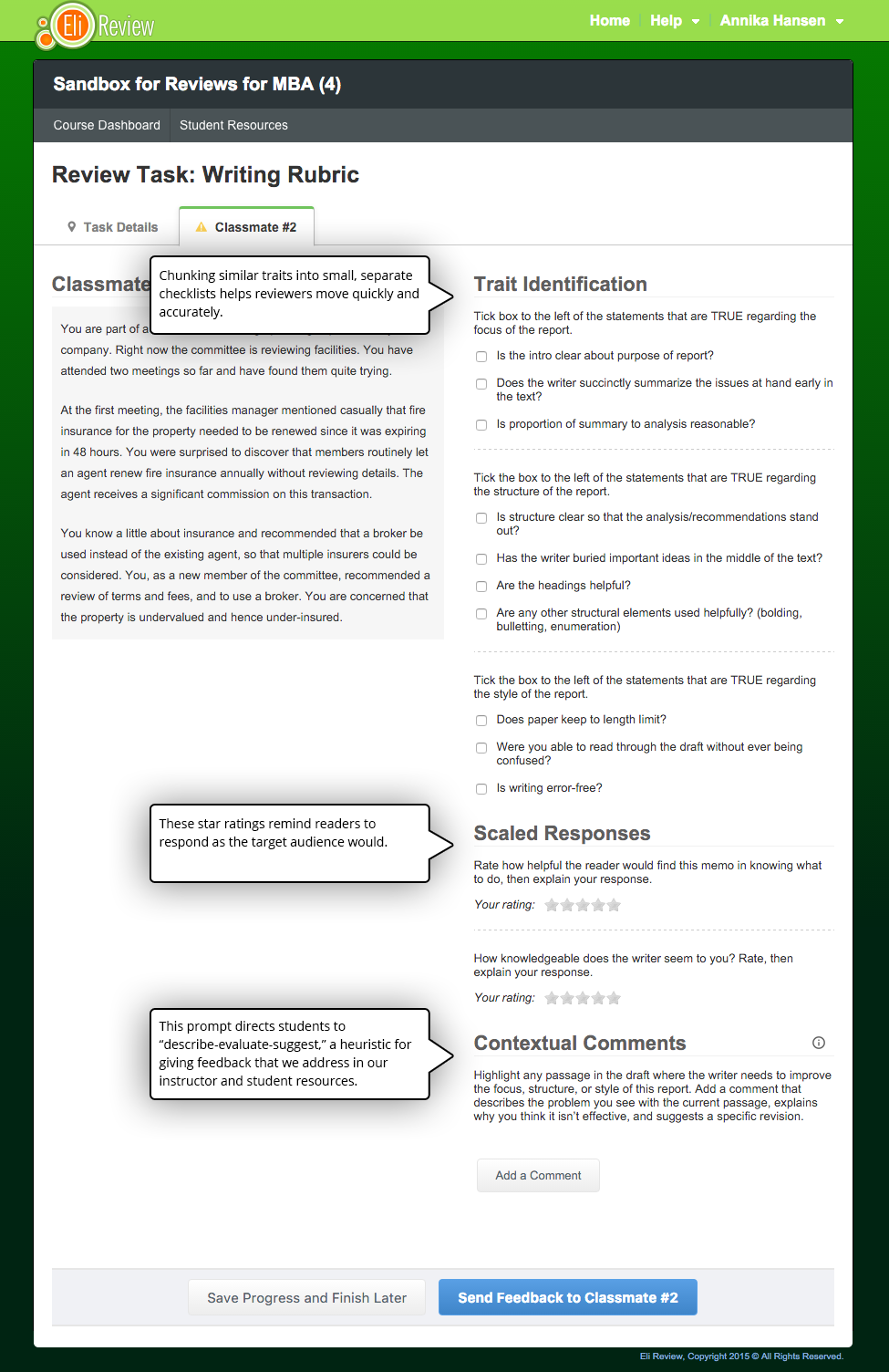

Similarly, the writing review simplifies the task by grouping similar traits in separate checklists. The ratings also encourage reviewers to respond as readers, as if they were the intended audience.

This two-review sequence solves Susheela’s concerns about length and complexity without adding time to the class activity. In the time it would have taken to do one comprehensive review, students can complete two streamlined reviews. These tasks also help reviewers read and respond to drafts with more description and more evaluation connected to suggestions. From this sequence, writers receive a substantial amount of quantitative feedback (from checklists and ratings) as well as qualitative feedback (from rating explanations and comments). Because the activities are bite-size and well-aligned, reviewers are also likely to give helpful, criteria-driven feedback.

Challenge: Getting Students to Transfer Skills to a New Communication Scenario

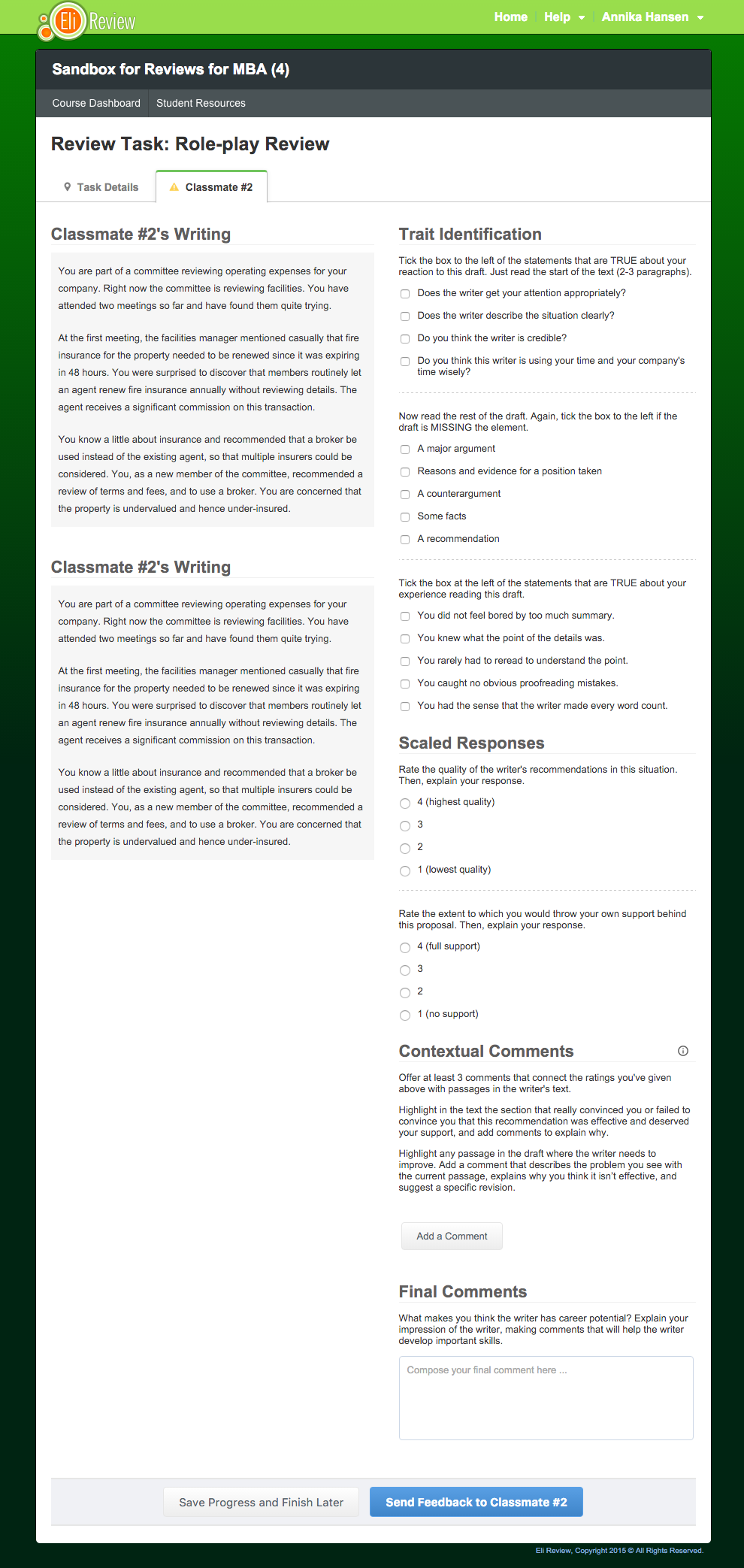

Though these two reviews would certainly work on their own, Melissa proposed a third review. She noted that Susheela’s case-based writing assignments were highly realistic. Writers were asked to imagine communicating to colleagues in a particular corporation about a very specific set of problems. The reviews, however, were very generic because they borrowed from the rubric’s language. Teacher talk doesn’t work at work. So, Melissa drafted a review that cast reviewers as the CEOs/supervisors receiving the communication from employees. She rephrased many of the criteria to better fit that reader role, and she omitted the criteria instructors can best evaluate (e.g., appropriate differentiation of stakeholders, helpful use of theory).

Melissa also added a new element: Reviewers in this role-play had to indicate what potential for contributing to the organization they thought this writer had based on this draft, offering comments that would further that person’s career path. In the corporate world, that’s what good feedback does. Marshall Goldsmith calls it “feedforward”; he describes it as the way an evaluation should help an employee understand what to do next. Writing teachers Rysdam and Johnson-Shull connect “feedforward” with “revision-centered goals: information that facilitates future success as opposed to labeling past behavior” (83). The move from talking about writing to talking about writers is a difficult one for reviewers (and instructors), but this role-play helps.

Susheela ultimately chose to use the role-play review, and she reported, “Class just ended and Eli Review worked like a charm!”

Email Melissa Meeks if you’d like her to consult on review task design or if you’d like to learn more about what it means to partner with Eli Review.

Goldsmith, Marshall. “Try Feedforward Instead of Feedback.” Marshall Goldsmith Library, Summer 2002. http://www.marshallgoldsmithlibrary.com/cim/articles_display.php?aid=110.

Rysdam, Sherri, and Lisa Johnson-Shull. “From Cruel to Collegial: Developing a Professional Ethic in Peer Response to Student Writing.” In Peer Pressure, Peer Power: Theory and Practice in Peer Review and Response for the Writing Classroom, edited by Steven J. Corbett, Michelle LaFrance, and Teagan E. Decker. Southlake, Texas: Fountainhead Press, 2014.