This document describes the different response types instructors can add to a Review Task. To learn more about review tasks in general, consult the Instructor User Guide or the Student User Guide.

There are a couple of important points to keep in mind:

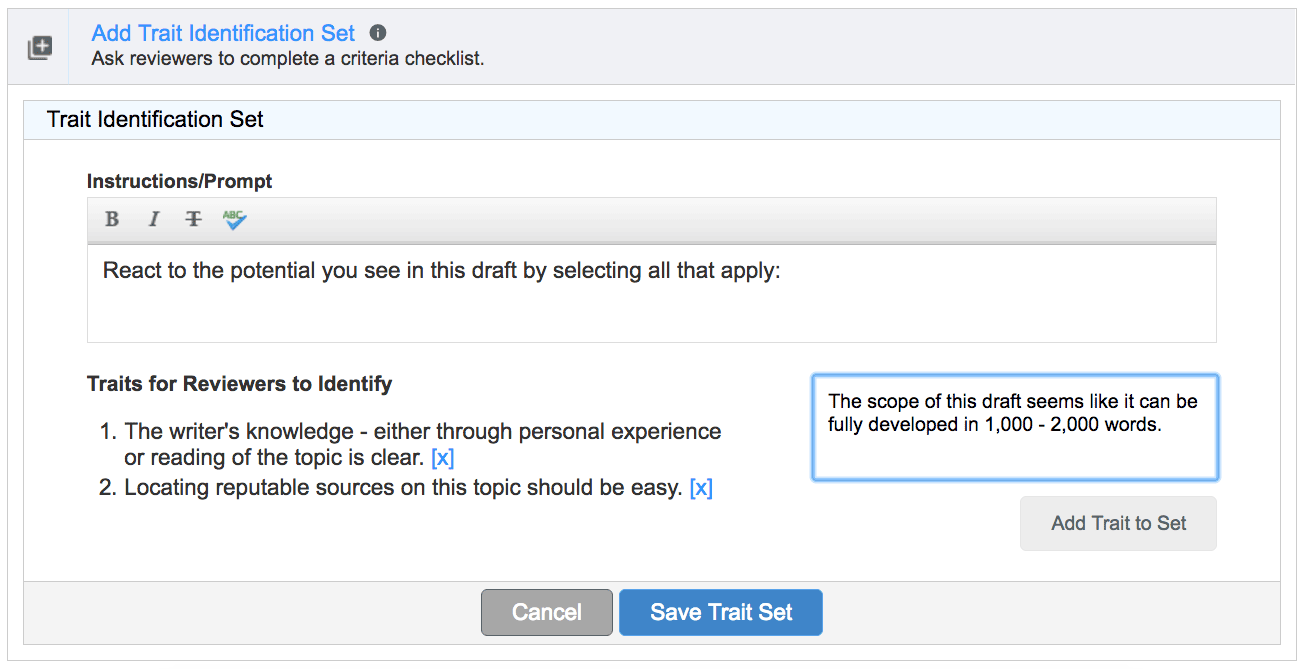

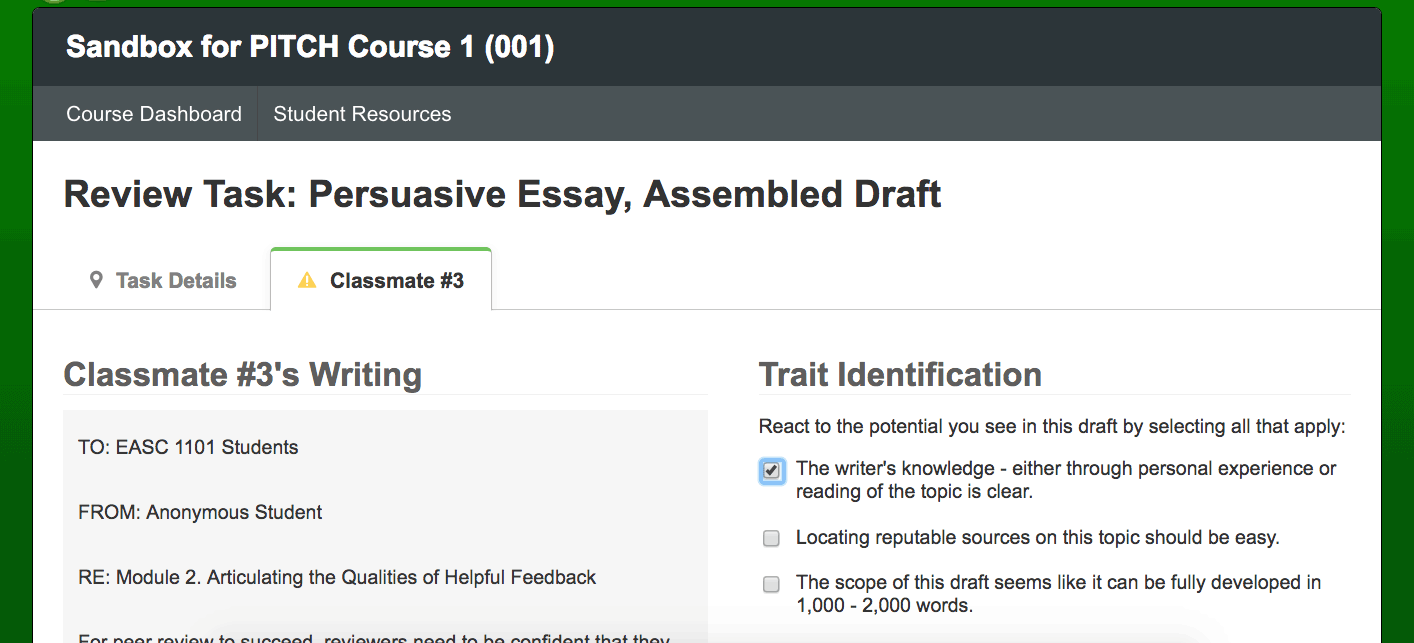

Trait identifications are checklists. Reviewers check the box to indicate that the draft includes the trait.

Example 1

Tick the box if the draft meets these minimum expectations:

Trait identification items offer writers formative feedback that helps them prioritize next steps for revision. When required elements are missing, writers and instructors learn what to work on in the next draft. When important traits are present, writers can see where they are already meeting expectations.

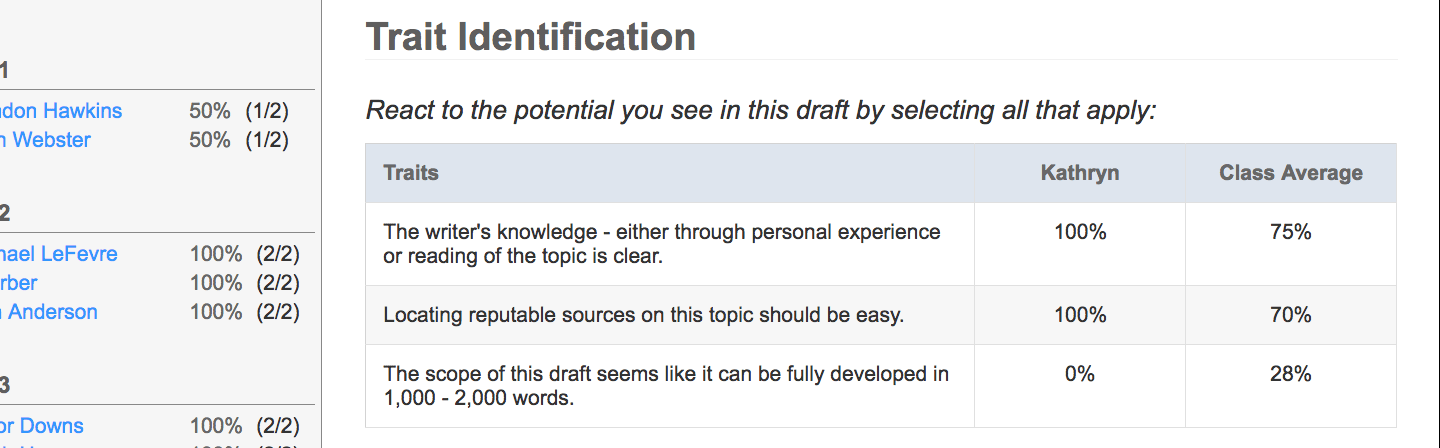

Trait identification elements are also aggregated for the whole class, which helps instructors see what kinds of issues to include when debriefing. If your review results page shows that 95% of your students have a full citation in their article summary, for instance, you know you can likely move on to work on something that a smaller percentage of students have mastered or prioritized in their drafts.

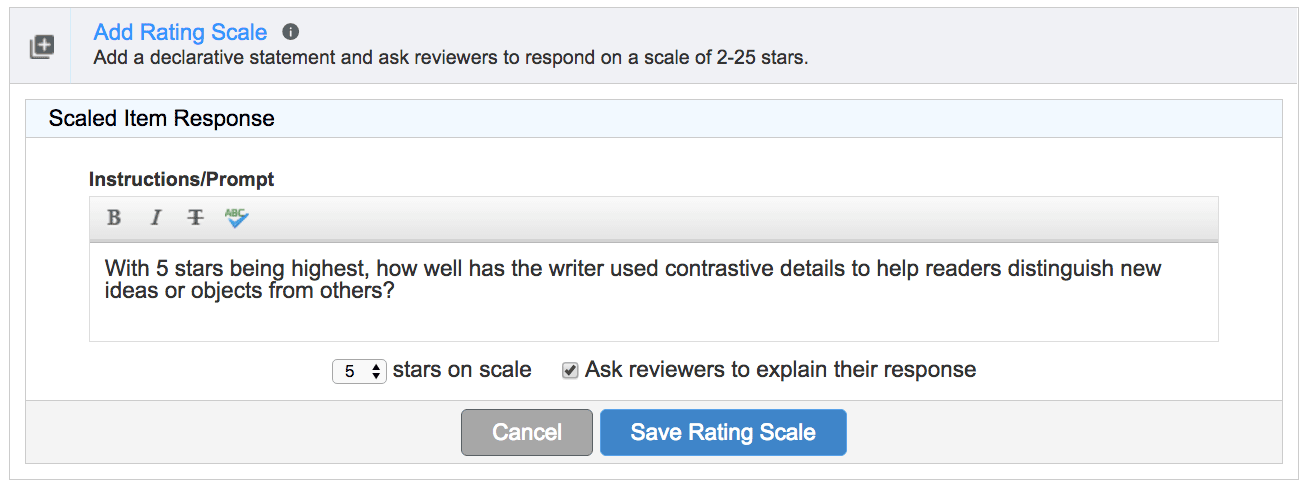

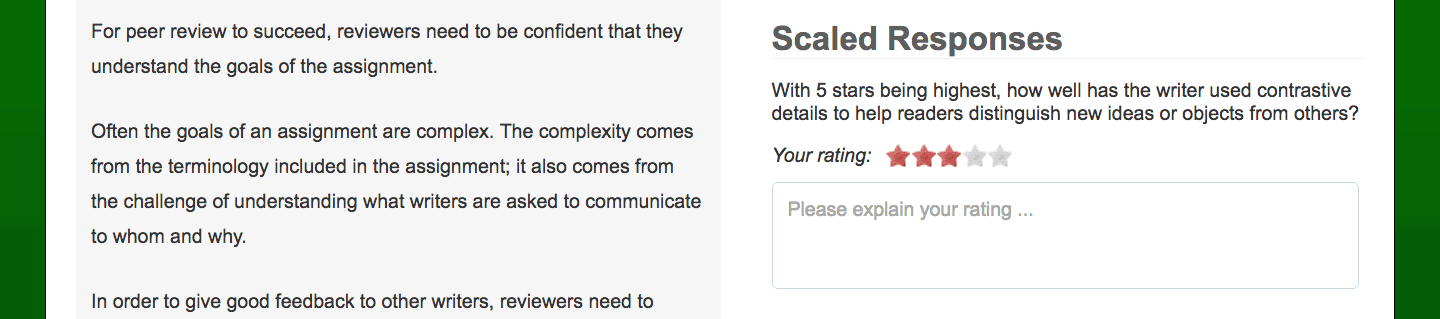

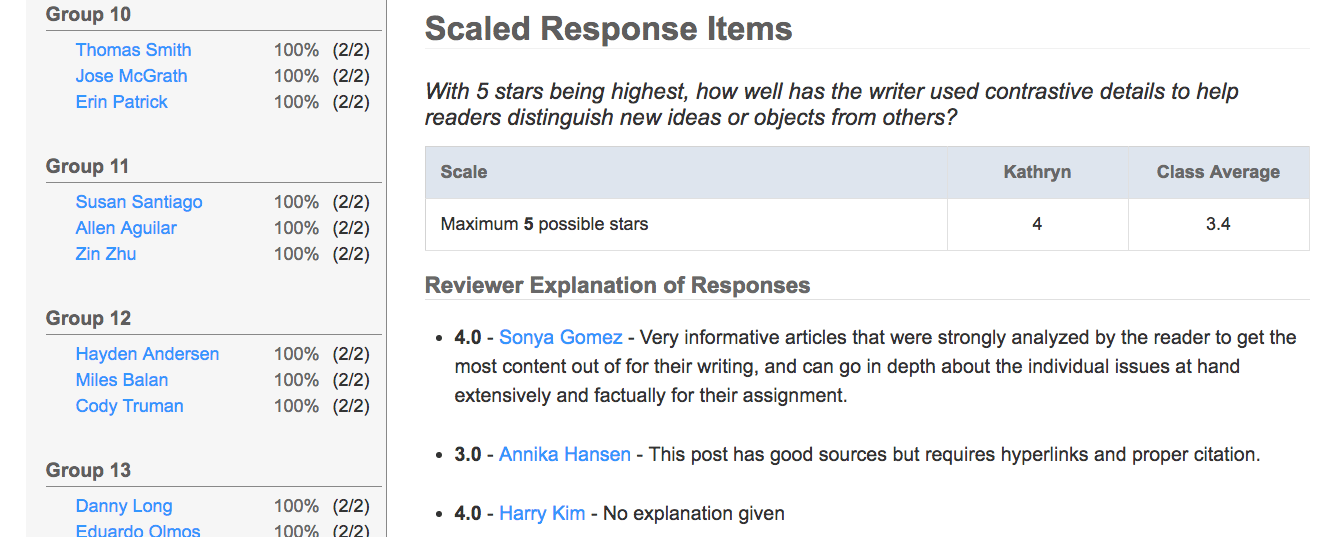

Scaled response items ask students to evaluate how well drafts meet criteria.

The prompt explains the scale, as shown in these three examples.

Example 2

On a scale of 1 to 5 with 1 being lowest and 5 highest, how confident are you that this thesis is specific, debatable, significant, and well-stated?

Example 3

Where 1 star = “meh” and 5 stars = “wow,” rate your reaction to this draft.

Example 4

Nominate this draft for class discussion.

5 stars = “Everyone can learn something from this one”

1 star = “Needs work before sharing”

Scaled response items are the most versatile response type. They can elicit feedback ranging from formal to informal at almost any stage of the writing process.

Rating scales are aggregated for the whole class, so the instructor can see class averages and compare individual students' results with those of the whole group.

Eli identifies “peer exemplars” by showing the instructor which students' work scores highest on scaled items. Instructors can use these peer exemplars during debriefing to showcase student success, and the class can discuss the choices those writers made.

Keep the following in mind about scales:

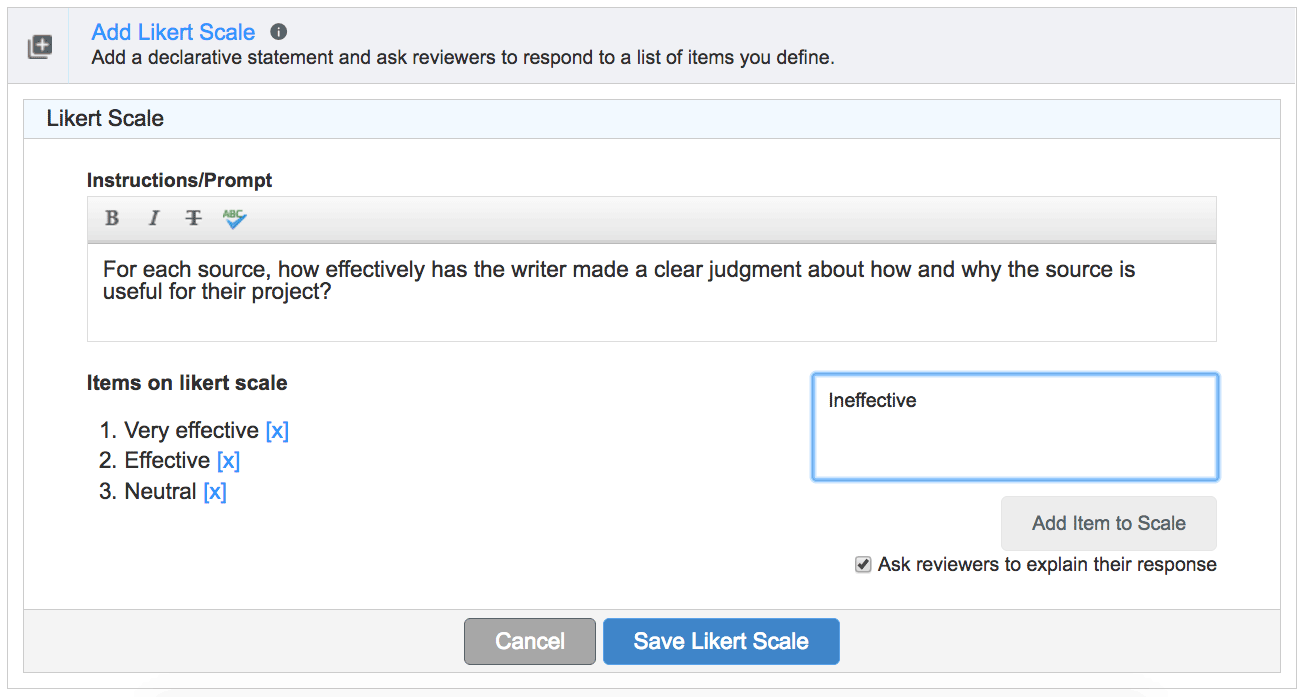

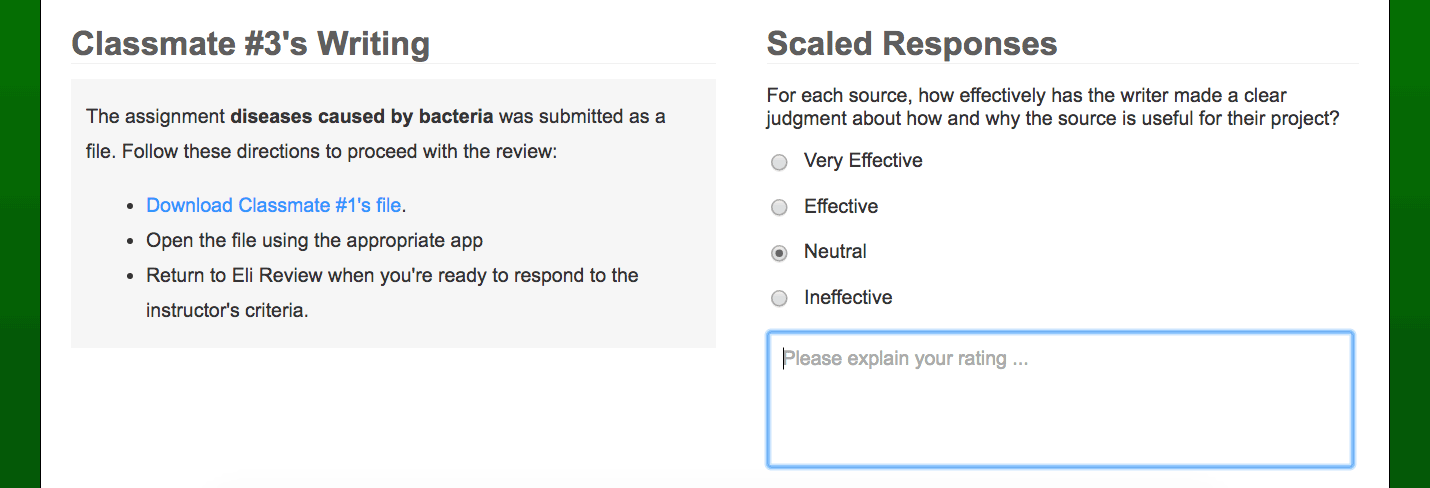

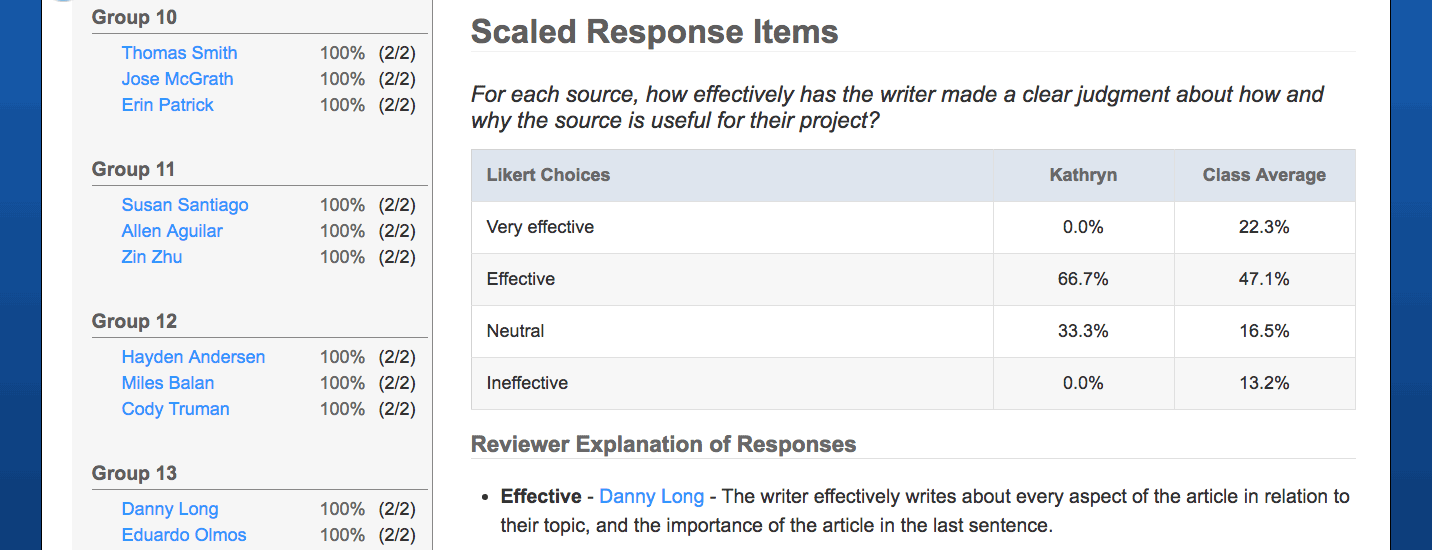

A Likert scale functions like a multiple-choice question. If you have designed a grading rubric for your writing assignment, each row in your rubric can become a Likert scale.

Example 5

Choose the BEST description of how the writer addresses genre and disciplinary conventions:

Keep the following in mind about Likert scales:

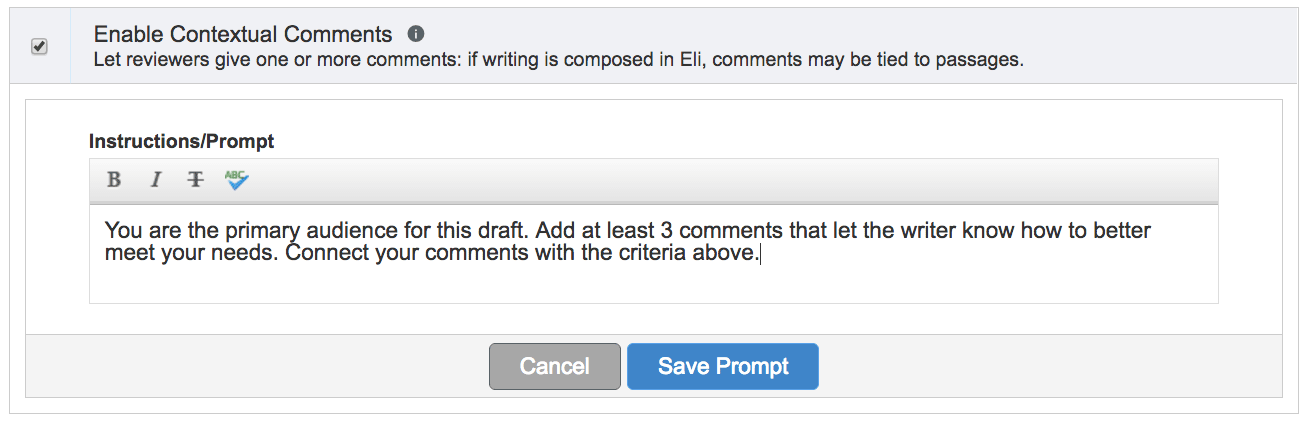

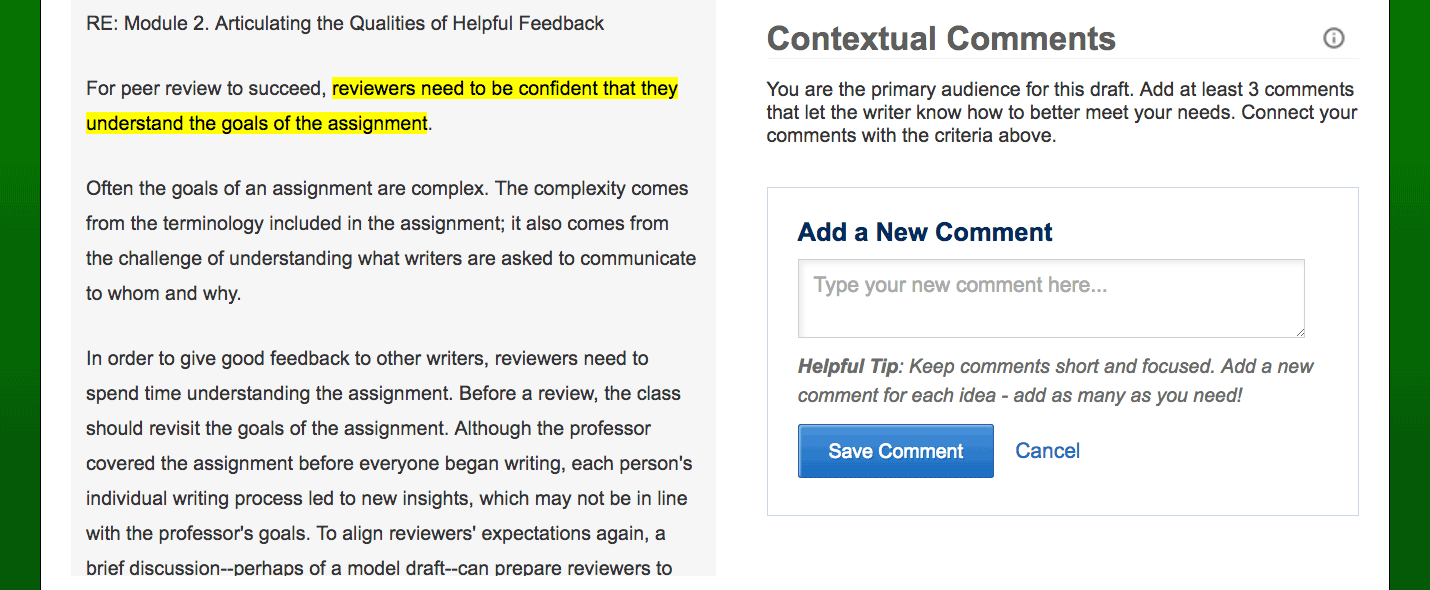

Comments are open-ended text responses. For assignments that have been typed or pasted into the Eli editor, comments can be connected to highlighted passages. They can also be made without highlighting. Instructors can use the comment prompt to ask reviewers for specific types of feedback.

Example 6

Please add 3 comments.

Your comment should “describe-evaluate-suggest” (https://elireview.com/2016/08/03/describe-evaluate-suggest/):

–Describe what part of the draft you are talking about

–Evaluate it by naming which criteria from the list above you are talking about and saying whether the draft meets them

–Suggest a strategy, question, or goal the writer should consider when revising

Remember that you can:

(1) offer praise comments that encourage writers to apply a strength in one section to another section of the draft

(2) offer critique comments that point out a pattern that the writer can address in multiple places

(3) offer strategies such as “use your browser’s features to read your draft aloud, and make edits as you hear mistakes” to encourage a revision process

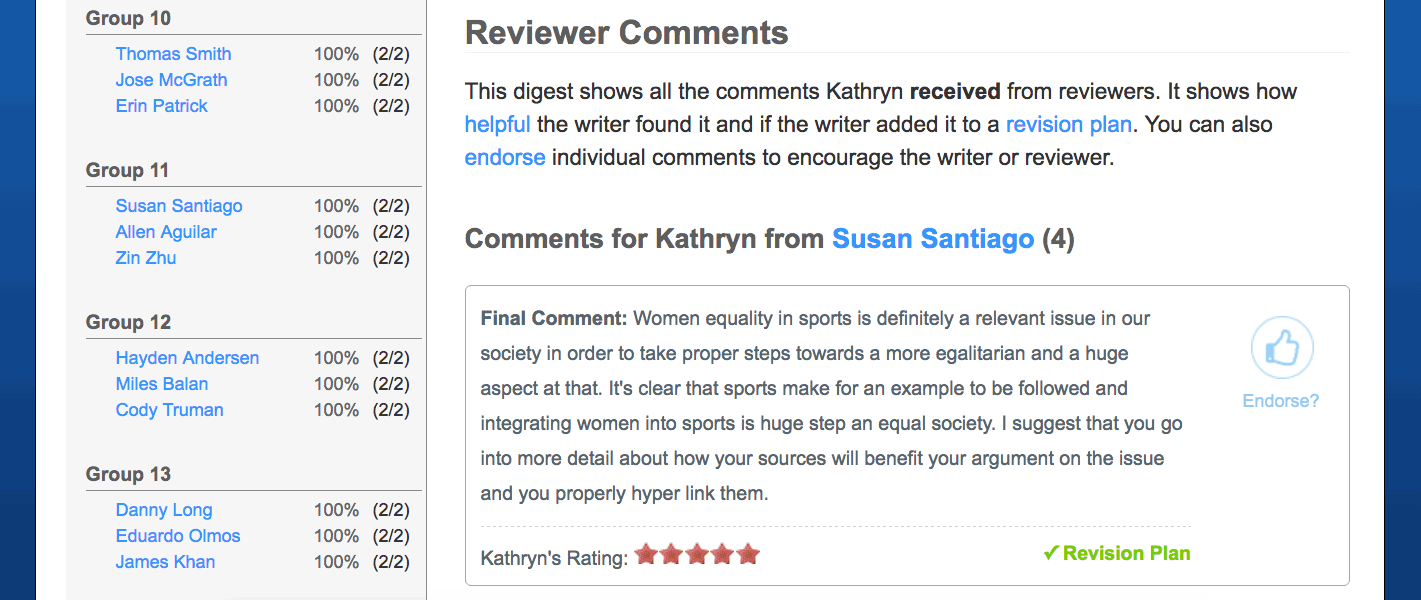

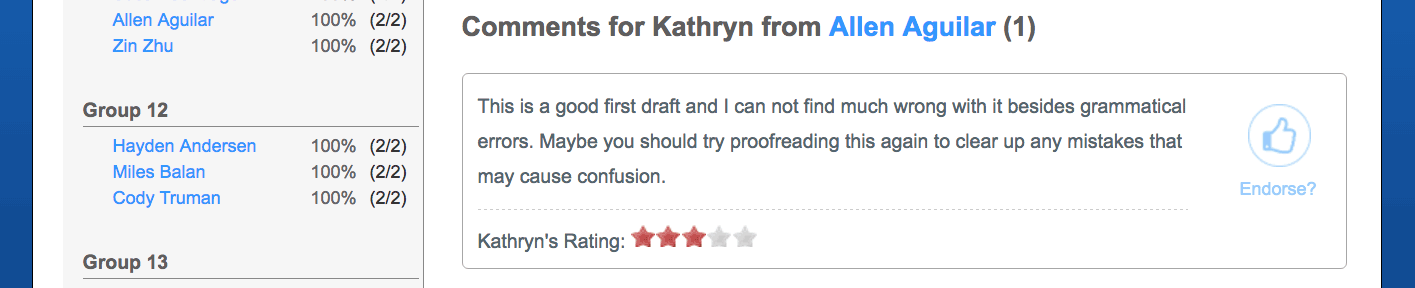

Eli displays comments in a browsable comment digest, making it easy to read through the feedback reviewers are giving writers.

Instructors can also endorse a comment by clicking a button. Endorsement lets the writer see that the instructor considers a particular comment valuable, and it shows the reviewer that the instructor found the comment helpful.

Keep the following in mind about contextual comments:

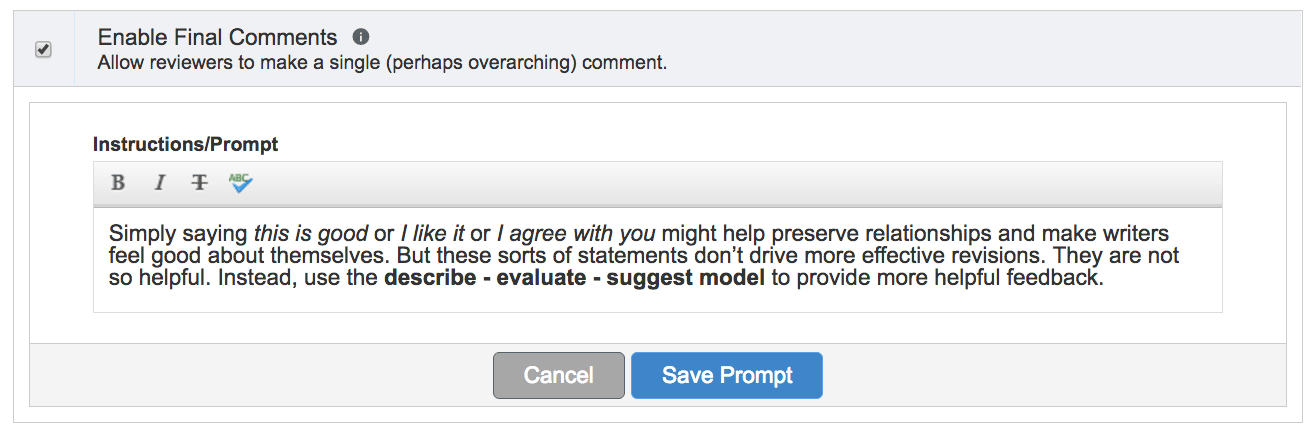

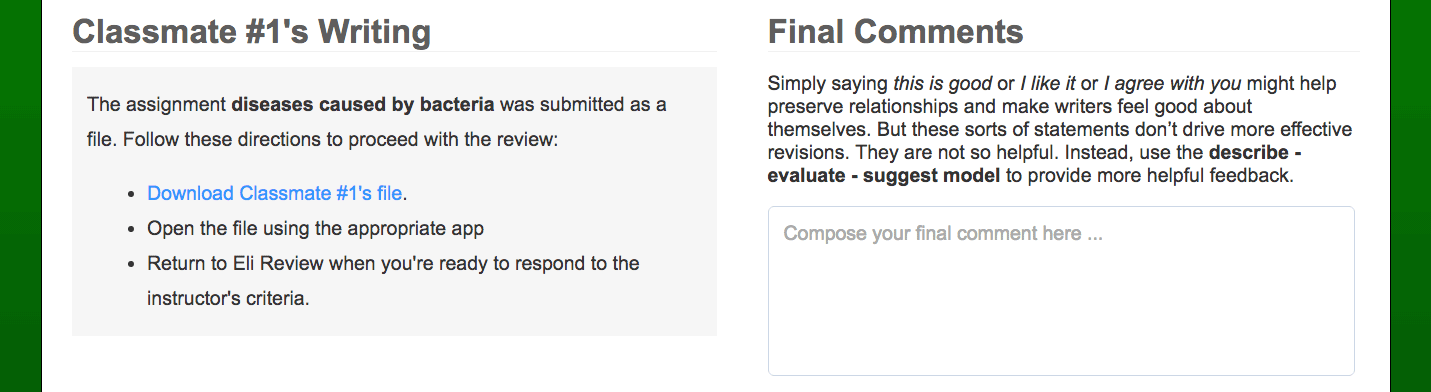

A final comment is a single open-ended response that cannot be tied to a highlighted passage.

Example 7

What do you think the writer should be most proud of about this draft?

Example 8

Assume the writer will invest 30 more minutes in revising. Recommend specific revision tasks and estimate the time for each. Your recommendations should total 30 minutes.

Example 9

What did you learn from this draft? You might discuss something about the topic or the writing style.

Keep the following in mind about final comments: