This is the third in the Investigating Your Own Course series.

Last Thursday we used the Highlights report from Eli Review’s Course Analytics feature to determine whether Bill Hart-Davidson had designed and facilitated a feedback-rich environment in his Technical Writing course. Today’s post focuses on where to look to gauge whether that feedback-rich environment was helpful.

To continue following along using data from your own course, find your Highlights report by following these steps:

- Click on the name of a current or previous course from your Course Dashboard.

- From the course homepage, click the “Analytics” link. “Engagement Highlights” are shown first.

Question 2: To what extent was this feedback-rich environment helpful?

Last week’s post focused on simple counts and averages that helped us see the types of work students were assigned and the volume of their responses. Quantity can tell us only a little about quality, however. This post demonstrates the ways Eli Review analytics let users indicate quality. We’ll use these numbers to draw conclusions about how helpful writers and the instructor found the feedback-rich environment.

How helpful on average did writers find the feedback they received?

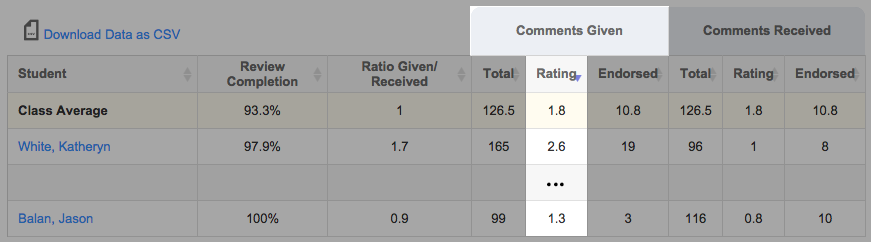

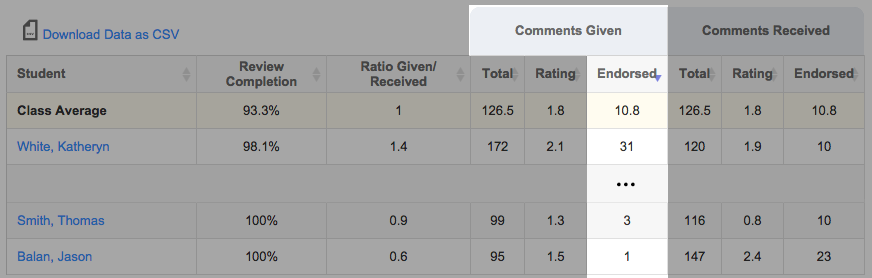

Writers can indicate how helpful they found the comments they’ve received by rating each comment with 1-5 stars. Bill teaches his class to be as stingy with the stars as he is with endorsing. In the Comments Given group, the “Ratings” column shows each reviewer’s average helpfulness rating across all reviews. The least helpful commenter averaged 1.1 stars whereas the most helpful commenter averaged 2.6 stars. Again, that’s a big gap: a 1.5-star difference!
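For readers who export their own data, the averaging behind a “Ratings” column can be sketched in a few lines of Python. This is only an illustration: the reviewer names and star values below are invented (chosen so the averages come out to 1.1 and 2.6), and the data layout is an assumption, not Eli Review’s export format.

```python
from statistics import mean

# Hypothetical data: reviewer -> star ratings (1-5) that writers gave
# to that reviewer's comments. Names and values are invented.
ratings_by_reviewer = {
    "reviewer_a": [1, 1, 1, 1, 1, 1, 1, 1, 1, 2],
    "reviewer_b": [3, 2, 3, 2, 3],
    "reviewer_c": [2, 2, 1, 3, 2],
}

def average_helpfulness(ratings):
    """Return each reviewer's mean star rating, rounded to one decimal."""
    return {
        name: round(mean(stars), 1)
        for name, stars in ratings.items()
        if stars  # skip reviewers whose comments were never rated
    }

averages = average_helpfulness(ratings_by_reviewer)
lowest = min(averages.values())
highest = max(averages.values())
gap = round(highest - lowest, 1)

print(averages)
print(f"Gap between most and least helpful reviewer: {gap} stars")
```

With these made-up ratings, the lowest average is 1.1, the highest is 2.6, and the gap is 1.5 stars.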

Note: Additional student analytics show how an individual student compared to the top and bottom 30% of the class, which can help Bill contextualize these helpfulness ratings.

How many comments received instructor endorsement?

The Highlights report also includes the count of the comments Bill endorsed. Endorsing is a way for instructors to tell writers that they agree with peers’ comments. Bill was intentionally stingy about endorsing.

Another analytic shows that he endorsed only 1% of all the comments exchanged in the 13 reviews students completed. Bill’s approach provided a strong signal to writers about helpful feedback and to reviewers when they’d given good feedback. It’s quite telling that Bill endorsed 31 comments given by one student and just one comment given by another.
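An endorsement percentage like this is just endorsed comments divided by all comments exchanged. A minimal Python sketch, using placeholder counts rather than Bill’s actual figures (the function name is also hypothetical):

```python
def endorsement_rate(endorsed, total):
    """Return the percentage of comments the instructor endorsed."""
    if total == 0:
        return 0.0  # no comments exchanged, so nothing to endorse
    return 100 * endorsed / total

# Placeholder counts for illustration only, not data from Bill's course.
rate = endorsement_rate(endorsed=12, total=1200)
print(f"{rate:.1f}% of comments endorsed")
```

Running the same calculation per student, rather than for the whole class, would show how unevenly endorsements are spread across reviewers.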

By looking at the quantity of comments he endorsed and at the average helpfulness rating according to the writers who received those comments, Bill can feel confident that writers found the feedback they received helpful.

So, to what extent was the feedback in Bill’s class helpful?

Using two metrics from the Highlights report in Bill’s class — average helpfulness of comments given and count of endorsed comments — we’re able to conclude that students had high expectations for helpful comments, which were often met.

But there’s more to helpfulness than counts, right?

Sure. That’s why Eli Review provides additional ways to explore and export data:

- Student-level analytics, which give instructors a portfolio of student work

- Comment Digest, which is downloadable and useful for corpus research

- Trend Graphs, which show performance over time

These reports make visible the learning students are doing while giving and receiving feedback. It’s formative feedback for Bill about how students are learning. It’s not everything, but it’s A LOT: at a glance, in real time, and at the end of a course.

In future installments of the Investigating Your Own Course series, we’ll look at more data in the Course Analytics features of Eli Review, including trend graphs, average student profiles, and comment digests.

We hope you’ll follow along with us and investigate your own classes too. Please share your findings on Facebook or Twitter with the hashtag #seelearning!

Interested in learning more?

- Want to learn more about interpreting your course data? See the Course Analytics user guide or read more about using formative feedback as evidence of learning.

- New to Eli Review? Here’s a breakdown of how to get started.

- Want to talk to a human about how to interpret your course data or how to get started? Contact Melissa Meeks, director of professional development.