Eli was designed as a platform to help instructors improve peer feedback; one of our co-founders has famously said “you can’t teach what you can’t see”.

Today we’re releasing the browsable comment digest for review tasks, a feature meant to make it easier than ever to see the feedback students exchange during a review. This data has long been available as a downloadable CSV file. Now it’s much easier to scroll through reviewer comments in real time to find exemplars, make endorsements, and coach feedback.

NOTE – these new features are retroactive, so instructors can go into reviews from old courses and browse through the feedback in past review activities.

Review Report – “Writing Feedback” tab

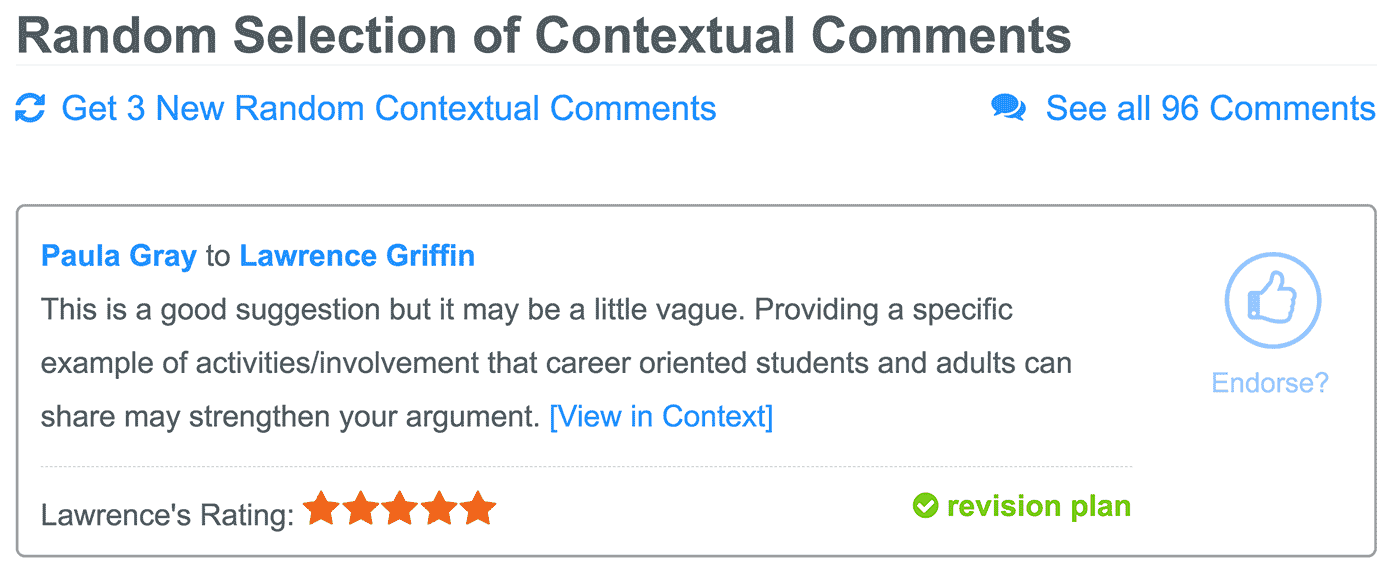

One of the first places instructors will notice a change is on the Writing Feedback tab of a review report.

Previously, the random selection of contextual and final comments only let instructors read the comments. Now, the selection includes all the interactive features. This view shows the helpfulness rating each writer gave a comment and whether the writer added it to their revision plan. Instructors can also give a comment the blue thumbs-up endorsement.

The option to “Get 3 New Random Comments” is particularly useful for in-class peer reviews, so that instructors can monitor the conversation before reviewers send feedback to writers.

Finally, a link in this section of the report opens the complete comment digest. It goes to the section of the Review Feedback tab that lists all reviewer comments (see below).

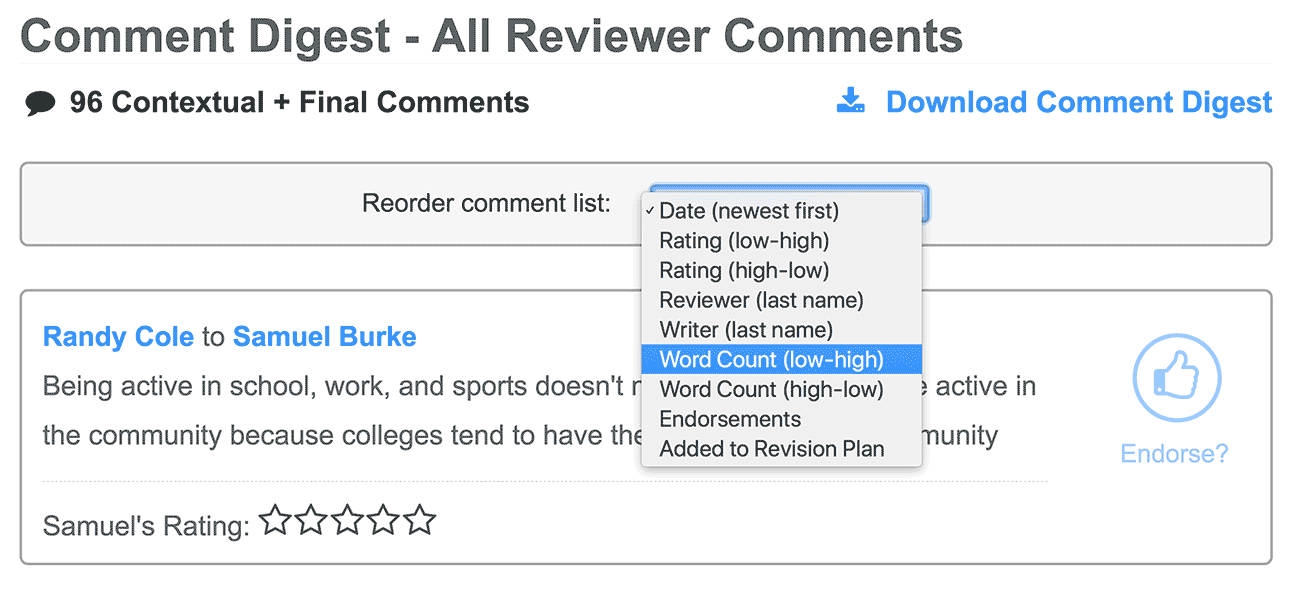

Review Report – “Review Feedback” tab

In contrast to the Writing Feedback tab, which shows only 3 comments at a time, the Review Feedback report shows every comment exchanged by reviewers in an activity. By default, the list is sorted by time sent, with the newest comments at the top. A new sorting menu lets instructors read through comments according to:

- Comment date / time (newest comments listed first by default)

- Rating (low/high or high/low)

- Word count (low/high or high/low)

- Reviewer last name

- Writer last name

- Endorsements

- Added to Revision Plan

Sorting the comment digest in these ways can help answer questions about how a review went, and it can also help identify exemplars for class discussion. For example, comments with a higher word count are more likely to exhibit the describe-evaluate-suggest heuristic, making them prime candidates for modeling helpful feedback.

Does seeing feedback in this way help you teach feedback to your students? Can Eli do more to help you see student learning? We’d love to hear your feedback – please email or tweet at us!