This post is a part of the series Challenges and Opportunities in Peer Learning.

In this blog series, we’ve tackled the challenges of peer learning. We’ve encouraged teachers to see each challenge as an opportunity to design for learning and offered strategies that can lead to more effective peer feedback and revision.

Some challenges start with instructors. We can schedule too little practice, overwrite peer feedback with instructor feedback, misjudge the thing students need the most help with, and be unaware of the routines we rely on that students don’t have. To make peer review most effective, instructors have to approach their role and goals differently.

Some challenges come from students who are disengaged, unsure, and resistant. Those students tend to be a minority. Most students do the work, and some work really hard. That mix of effort leads to the most persistent problem in peer learning: a lack of reciprocity.

Peer learning depends upon student engagement. As an instructor, you can design an effective review around specific learning indicators, but that well-designed task will only work if students engage. And, it will work best if students are engaging at roughly the same levels.

Design Challenge: Overachievers pout; slackers sponge.

We use the labels “overachievers” and “slackers” to emphasize the stereotypes and to call attention to the ways these labels oversimplify the factors influencing the student behaviors we observe. The labels highlight that student engagement usually shows extremes in both directions: reviewers who give far more comments than others and those who give far fewer.

No matter why students comment excessively or sparingly, both types contribute to a dissatisfying learning experience.

- Those giving too much feel slighted. Even though the act of giving feedback likely leads to the most learning, not getting feedback on their own work is frustrating for otherwise motivated students. Going forward, they could be less likely to engage.

- Those giving too little still get lots of feedback. There’s no immediate consequence for giving less feedback than they got. Going forward, there’s little incentive for them to engage more.

Reciprocity is best.

Design Opportunity: Demonstrate opportunity costs.

Students’ work in peer learning reflects their cost/benefit analysis of participating. Students will work harder if they fear missing out.

The costs of skipping out on peer learning can be high:

- no exposure to how peers responded to the writing task;

- no feedback;

- no opportunities to work through confusion about the criteria;

- no opportunities to practice thinking through problems and solutions;

- no points for submitting too few helpful comments.

But, even high costs may not convince students to do the work unless they value the benefits:

- getting better by being exposed to a wide range of drafts;

- getting better because of feedback received;

- getting better as a reviewer who can notice how drafts meet criteria and talk about that;

- getting better as a writer because of “giver’s gain” from being a good reviewer;

- getting points for submitting enough helpful comments.

Our experience has been that students contribute to peer learning based on their sense of how beneficial giving comments is to them personally. We have to help students see the connections between their effort as reviewers and their own improvement.

Strategy: Emphasize giver’s gain.

In Making a Horse Drink, we used the phrase “giver’s gain” to represent the way being a helpful reviewer leads to better writing. As reviewers notice what other writers have achieved or what they are missing, they can apply those insights to their own work. Giver’s gain can be as simple as a reviewer saying, “You are missing this requirement; oh, I think I left it out too!”

Our student resource on Feedback and Improvement begins with this claim about giver’s gain:

Giving feedback also helps us grow because we get better at noticing how writers’ choices are affecting us as readers. As we distinguish between successful and less successful strategies, we improve our skills as reviewers. There’s also an additional benefit for our work as writers: As we see strategies used well by others, we expand our set of effective writing tools.

When reviewers read others’ drafts, they learn. University of Rhode Island’s Director of Writing Nedra Reynolds reports that her students value peer learning first because of what they learn as readers:

From what my students tell me, it’s not just the comments they receive, it’s also the idea of seeing three other writers’ drafts. That’s the first thing they notice is just reading the other drafts is useful. Then responding to the prompts adds a whole other layer of usefulness or commentary.

As Nedra explains, when students bring the criteria into focus as they read and give feedback to other writers, they learn more.

Empirical research on peer learning corroborates giver’s gain too. In Making a Horse Drink, we highlighted four studies where the writers who improved the most were also the most engaged reviewers.

Giver’s gain is a downstream benefit of giving feedback, though. Students have to engage long enough to improve as reviewers. They also have to offer enough feedback to push themselves beyond the things they’ve always been comfortable saying to other writers. Once they start to notice new things about others’ work and feel comfortable offering a suggestion, they’ll be able to apply those insights in their own work.

Students tend to overlook giver’s gain, however, when they are frustrated by a lack of reciprocity. Plus, students who fully engage in giving feedback early in the term see downstream benefits first, so those who are holding back are slower to realize what they are missing out on. Here are several tips for calling attention to how the work students do as reviewers translates into their improvement as writers:

- In the first few class debriefing sessions, ask reviewers to name a suggestion they gave another writer that informed their revision plans. Students can read through the comments from the Feedback Given tab in a completed review task and make a list of the types of writing problems they mentioned. Then, they can reflect on how noticing those writing problems influenced their own revisions. Told to look for giver’s gain, students are likely to find some way to apply advice they gave. Or, they’ll realize that they didn’t give any advice worth applying, and that’s a teachable moment too.

- Ask writers to expand their revision plan beyond the best comments they received. In “Reducing the Noise,” we explained that instructors can stage revision plans to hold students accountable for all the feedback in the environment:

  - reading peers’ drafts

  - hearing models discussed in class

  - giving feedback to peers

  - hearing the most helpful comments discussed in class

  - hearing the instructor debrief checklists and ratings in class

  - participating in other class activities

- Instructors can identify exemplar reviewers and explain how those insights are also visible in the students’ drafts.

- Instructors can analyze comment patterns against rubric grades. Download the comment digest for the final review in a project, add a column called “Types of Comments,” and then label each comment in the digest. Crunch the numbers and make a pie chart to see what reviewers’ priorities were. Compare those priorities with students’ rubric grades, if you have them. For example, if 40% of reviewers’ comments focused on evidence and more students earned higher scores for the quality of evidence in their final papers, that’s an indication of giver’s gain.
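The “crunch the numbers” step in the last tip can be scripted once each comment has a label. The following is a minimal sketch, assuming the labels from the “Types of Comments” column have already been copied into a plain Python list; the function name `comment_type_shares` and the sample labels are hypothetical, and the code does not read the comment digest file itself.

```python
from collections import Counter


def comment_type_shares(labels):
    """Return the percentage of comments carrying each label, largest share first."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: 100 * count / total for label, count in counts.most_common()}


# Hypothetical labels an instructor assigned to ten comments.
sample_labels = ["evidence"] * 4 + ["organization"] * 3 + ["grammar"] * 3

for label, share in comment_type_shares(sample_labels).items():
    print(f"{label}: {share:.0f}%")
```

The resulting percentages are the same numbers a pie chart would display, so they can be set directly beside rubric scores for the matching criteria.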

Underscoring the skills and benefits reviewers gain through the process helps students understand why the work they are doing to help other writers also helps them. The personal benefit can motivate students to engage more.

Design Opportunity: Defining how much is enough.

Because we understand students’ engagement in peer learning to be a calculation of costs and benefits, we also understand the quantity of comments they give to be one indicator of their beliefs about how much is enough. Students at the extremes of the engagement continuum behave in accordance with what they perceive to be the norm. Overachievers might give tons more comments than others because they perceive themselves to be giving just enough. Slackers probably give too few from the same belief.

Analytics let instructors confront both misconceptions. By talking with the whole class about the peer norms during a review, instructors can help students gauge their efforts. Eli’s analytics for comments give instructors several strategies for shaping this conversation.

Strategy: Look for a Given/Received Ratio between 0.8 and 1.2.

Eli’s analytics allow instructors to gauge individual students’ reciprocity through given/received ratios:

- A ratio of 1 means students are truly giving one comment and getting one comment.

- A ratio between 0.8 and 1.2 is pretty close to reciprocal.

- A ratio below 0.8 means reviewers need to comment more.

- A ratio above 1.2 means reviewers might need to offer fewer comments.
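For instructors who keep their own tallies, the thresholds above can be sketched in a few lines of Python. This is an illustration only, not Eli Review’s implementation: the function names and the wording of the labels are hypothetical, and treating a student who received no comments as “undefined” is our assumption.

```python
from typing import Optional


def given_received_ratio(given: int, received: int) -> Optional[float]:
    """Comments given divided by comments received; None when nothing was received."""
    if received == 0:
        return None
    return given / received


def classify_reciprocity(given: int, received: int) -> str:
    """Map a given/received ratio onto the 0.8 to 1.2 band described above."""
    ratio = given_received_ratio(given, received)
    if ratio is None:
        return "undefined"
    if ratio < 0.8:
        return "comment more"
    if ratio > 1.2:
        return "consider fewer comments"
    return "roughly reciprocal"


# Hypothetical students: (comments given, comments received)
for given, received in [(5, 5), (3, 6), (9, 6)]:
    print(given, received, classify_reciprocity(given, received))
```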

Here are three methods to use Eli Review’s analytics to monitor reciprocity in total number of comments:

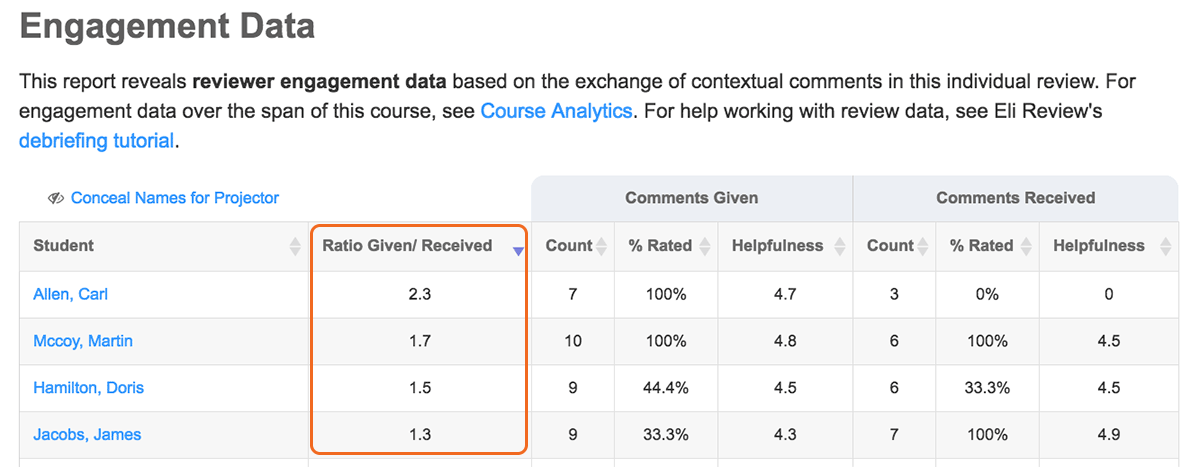

Method 1. Within a single review, instructors can check the Given/Received Ratio in the Engagement Tab. This ratio indicates within-group reciprocity. It shows if students gave as many comments as those they were partnered with.

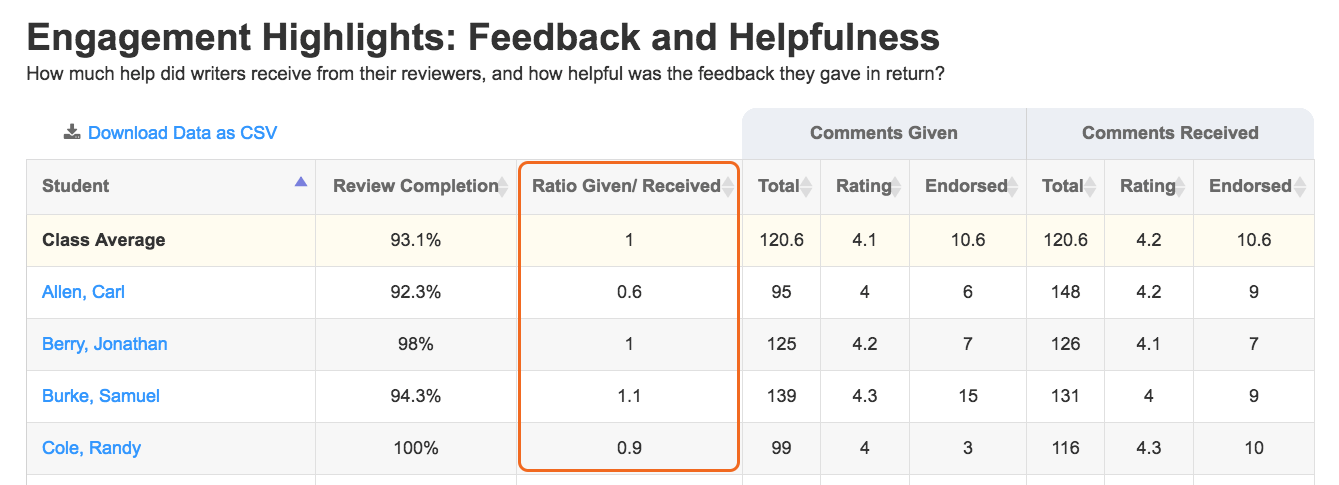

Method 2. Across all reviews, instructors can check the Engagement Highlights from the Analytics tab to see cumulative reciprocity to-date. This ratio cuts across groups. Be aware that reviewers who were in larger groups (e.g., 4 peers in a group of 5) had more opportunities to give comments than those in smaller groups (2 peers in a group of 3).

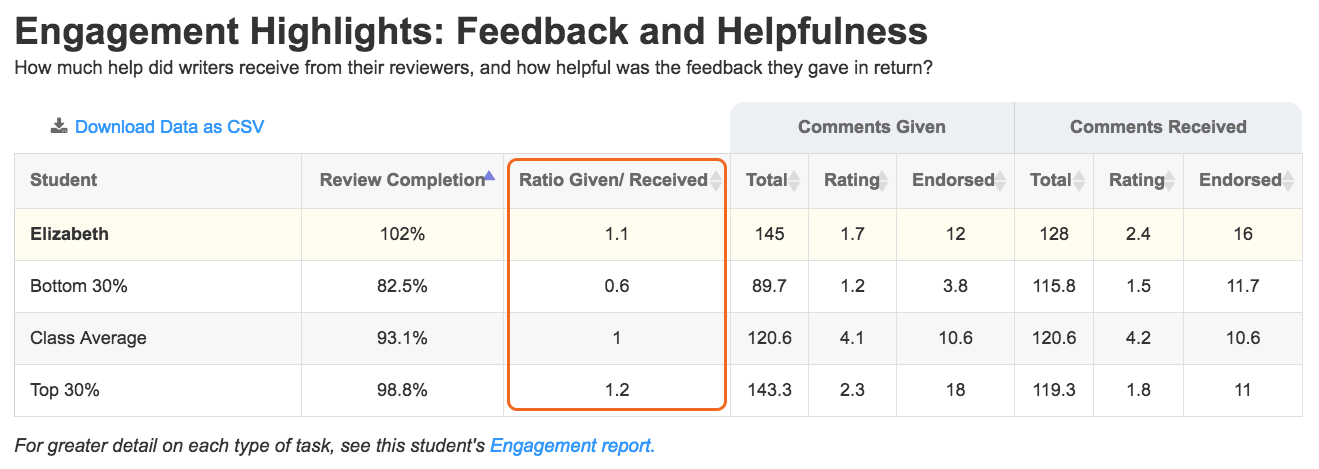

Method 3. Per student across all reviews, instructors can see how an individual student compares to the class average, the top 30%, and bottom 30% by clicking a student’s name in Analytics or in the Roster.

This ratio of given/received comments provides a uniform metric for checking reciprocity. It shows a student’s within-group reciprocity ratio for each review.

Be careful, though: the ratio for the student who gave 8 and received 6 comments is the same as the ratio for the student who gave 4 and received 3, even though the first student gave and received twice as many comments as the second.

Strategy: Announce average number of comments given.

Because ratios don’t reflect overall totals, instructors should also check the averages. When trying to create a “give one comment; get one comment” environment, being near the average is a good goal.

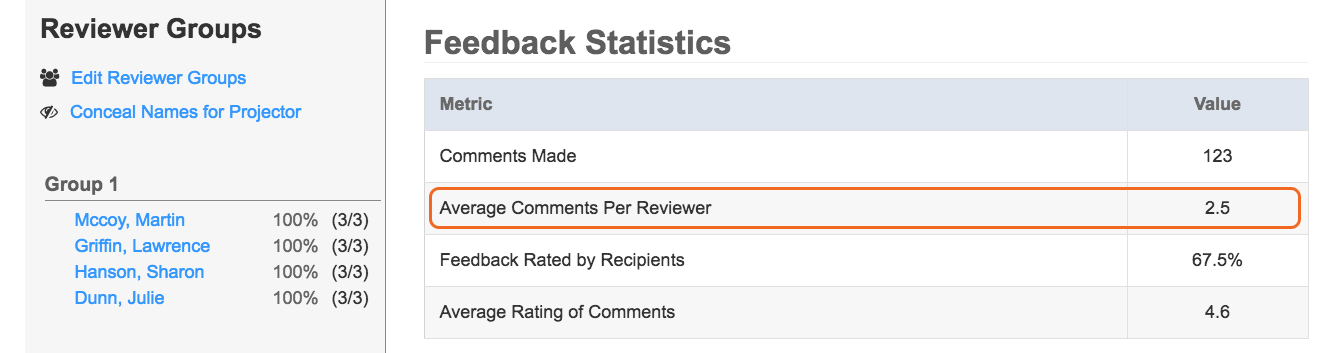

Eli shows average comments given to students and instructors in the Feedback Statistics portion of the Review Feedback tab in a review task.
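To see why ratios and averages need to be read together, here is a small sketch using made-up numbers. The student names, the counts, and the helper functions `average_given` and `position` are all hypothetical; Eli reports the average for you, so this only illustrates the arithmetic.

```python
# Hypothetical data: student -> (comments given, comments received)
comments = {
    "Student A": (8, 6),
    "Student B": (4, 3),
    "Student C": (6, 6),
    "Student D": (2, 5),
}


def average_given(data):
    """Mean number of comments given across all students."""
    return sum(given for given, _ in data.values()) / len(data)


def position(given, average):
    """Describe where one student's total sits relative to the class average."""
    if given > average:
        return "above average"
    if given < average:
        return "below average"
    return "at average"


class_average = average_given(comments)
for name, (given, received) in comments.items():
    ratio = given / received
    print(f"{name}: ratio {ratio:.2f}, gave {given} ({position(given, class_average)})")
```

In this made-up data, Students A and B share a ratio of about 1.33, yet A sits above the class average of 5 comments given and B sits below it.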

Reviewers who are below the average need to offer more comments next time. Labeling these students as “slackers” who need to be more motivated might not be accurate. Sometimes low commenters don’t know what to say. These students may be challenged to think of what to suggest. Regularly modeling helpful comments and even including comment templates in the review task prompt can encourage these reviewers to offer more feedback.

Reviewers who are above the average may need to scale back on how many comments they give. These overachieving students need to prioritize the feedback they offer so that they comment only on the most important aspects of the draft. They might be overwhelming writers with a too heavy hand. Also, the quantity of comments can be misleading. These students may be overachieving as editors focused on grammar and mechanics rather than giving helpful feedback that improves rhetorical sophistication, ideas, and organization. Encourage them to focus on giving fewer, more helpful comments instead.

In the Engagement data tab in a review, instructors can see all the scores that contribute to the average. By sorting the “Comments Given” column, they can see the full spread of scores.

- If the outliers are way out of synch with most of the class, that’s a great reason to have a private conversation with those students.

- If there are groups of students at the top, middle, and bottom, it’s easy to talk to the whole class about a desirable middle.

Norms are important in peer learning because students only have a window into their own experience within their groups. Groups can be really different. By talking to students about the patterns of engagement across the class, instructors can help students understand how to match the effort of those around them.

Strategy: Go deeper and check reciprocity in terms of word count.

Comment totals describe one dimension of reviewers’ effort in giving feedback, and comment word count can give that total more depth. To analyze comment word count, use a hack that pastes the comment digest download into a Google sheet template. The reports, which require some customization, include one about reciprocity based only on word count.

Details about how many words reviewers are writing in comments can help you assess how much they can say to writers about their drafts. If they can’t/won’t say much, students probably are struggling to learn the skill you are teaching.
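As an alternative to the spreadsheet template, the same word-count idea can be sketched in Python. This is not the template’s logic: the record format of (reviewer, writer, comment text) is an assumption about how you might arrange the comment digest data, and the function name `word_count_reciprocity` is hypothetical.

```python
from collections import defaultdict


def word_count_reciprocity(comments):
    """Return {student: (words given, words received, ratio or None)}."""
    given = defaultdict(int)
    received = defaultdict(int)
    for reviewer, writer, text in comments:
        words = len(text.split())  # whitespace-separated word count
        given[reviewer] += words
        received[writer] += words
    result = {}
    for student in sorted(set(given) | set(received)):
        g, r = given[student], received[student]
        result[student] = (g, r, g / r if r else None)
    return result


# Hypothetical comments: (reviewer, writer, comment text)
sample = [
    ("Ana", "Ben", "Add a source for this claim."),
    ("Ben", "Ana", "Good."),
]

for student, (g, r, ratio) in word_count_reciprocity(sample).items():
    print(student, g, r, ratio)
```

Here both students gave one comment, so a comment-count ratio would look perfectly reciprocal, while the word counts show one reviewer wrote six words and the other wrote one.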

Design Opportunity: Show how the skills transfer.

Giver’s gain has near-term downstream benefits, but there are also long-term benefits. Strong writers may need extra support in seeing those. And, everyone can be reminded that giving feedback is a leadership skill.

Strategy: Pay attention to strong writers.

Strong writers tend to have the least reciprocal peer learning experiences. Most reviewers have trouble responding to a skillful draft. These strong writers almost never get substantive feedback because reviewers who can spot obvious problems have little to say when a draft has none.

Instructors need to pay special attention to strong writers not only because they are unlikely to get good feedback but also because they are critical to elevating the conversation in peer learning. If strong writers disengage from peer learning because they aren’t getting anything from it, the whole class suffers. Without their labor at giving excellent feedback, the instructor’s job of helping everyone improve is harder, if not impossible.

We find that three methods of paying attention to strong writers keep them engaged:

- Shuffle them into groups with equally strong writers or with particularly strong reviewers regularly.

- Reply to their revision plans in more depth and specificity (because peers can’t . . . yet).

- Oddly enough, shift attention away from strong writers’ drafts to their comments. These students need this kind of spiel:

The feedback you give others reveals what you explicitly know about writing; your draft can be written using merely your strong, practiced instincts. One day those will run out, so I’m trying to teach you skills that can help you confront novel rhetorical situations in your future professional life with the same kind of sophistication you produce effortlessly in school writing right now. Because your writing is so polished, I’m going to look most closely at your comments to others and your revision plans to see if you are showing your work as a reflective writer. Unlike most other writers, I don’t need your output to change, but I do need to see you work less from the gut and more from your brain in comments.

So much of the conversation about peer learning is about managing the disengaged, unskillful writers. But, it’s strong, disengaged writers who pose the greatest threat to successful peer learning.

Strategy: Cultivate leadership.

Although we mentioned this in a previous blog, Bill Hart-Davidson’s line bears repeating: “The best leaders give the best feedback.” When students are learning to talk to each other about things they’ve left out, options they could take, and expectations they’ve not met, they are learning to be effective leaders. Knowing how to approach people with different skill levels and help them move forward is key.

Bottom line: Give one, get one. . . and more.

“Give one, get one” is the motto for reciprocal peer learning. Students who feel like they got back what they put in will be more satisfied with the process, so instructors need to communicate peer norms.

“Giver’s gain” in peer learning means that students also get more insight about writing from the process of giving feedback. Emphasizing the personal benefits of giving helpful feedback is particularly important for strong writers, who need extra attention since reviewers struggle to offer them helpful feedback.

By watching peer norms and class averages, instructors can do their best to close the reciprocity gap between the students with the highest and lowest engagement in peer learning. Closing that gap, and helping students understand why their contributions to others’ learning help them too, helps students appreciate the process.